The workflow operating system for retail content at scale.

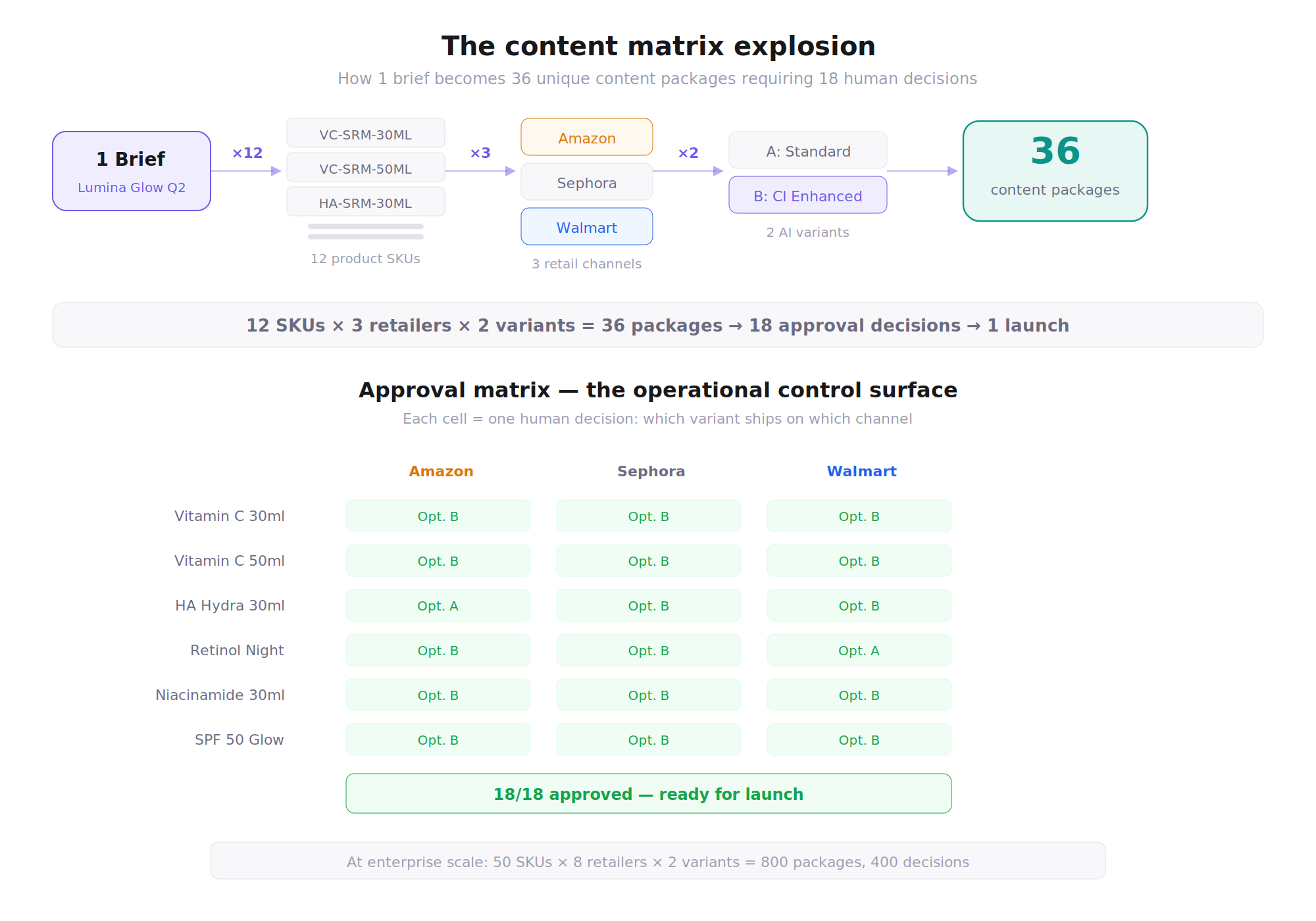

One brief in, 36 retailer-ready content packages out. AI accelerates the work. Humans own every decision that ships.

Retail content operations were slow, fragmented and expensive. Every product launch meant weeks of manual coordination across SKUs, retailers and markets, with approvals scattered across email threads and spreadsheets. I designed ShelfFlow: a structured workflow platform that turns a single product brief into retailer-ready content through AI-assisted generation, gated human approvals and multi-retailer deployment.

I led product design end-to-end: workflow architecture, state machine design, decision authority mapping, AI orchestration boundaries and the full operator interface.

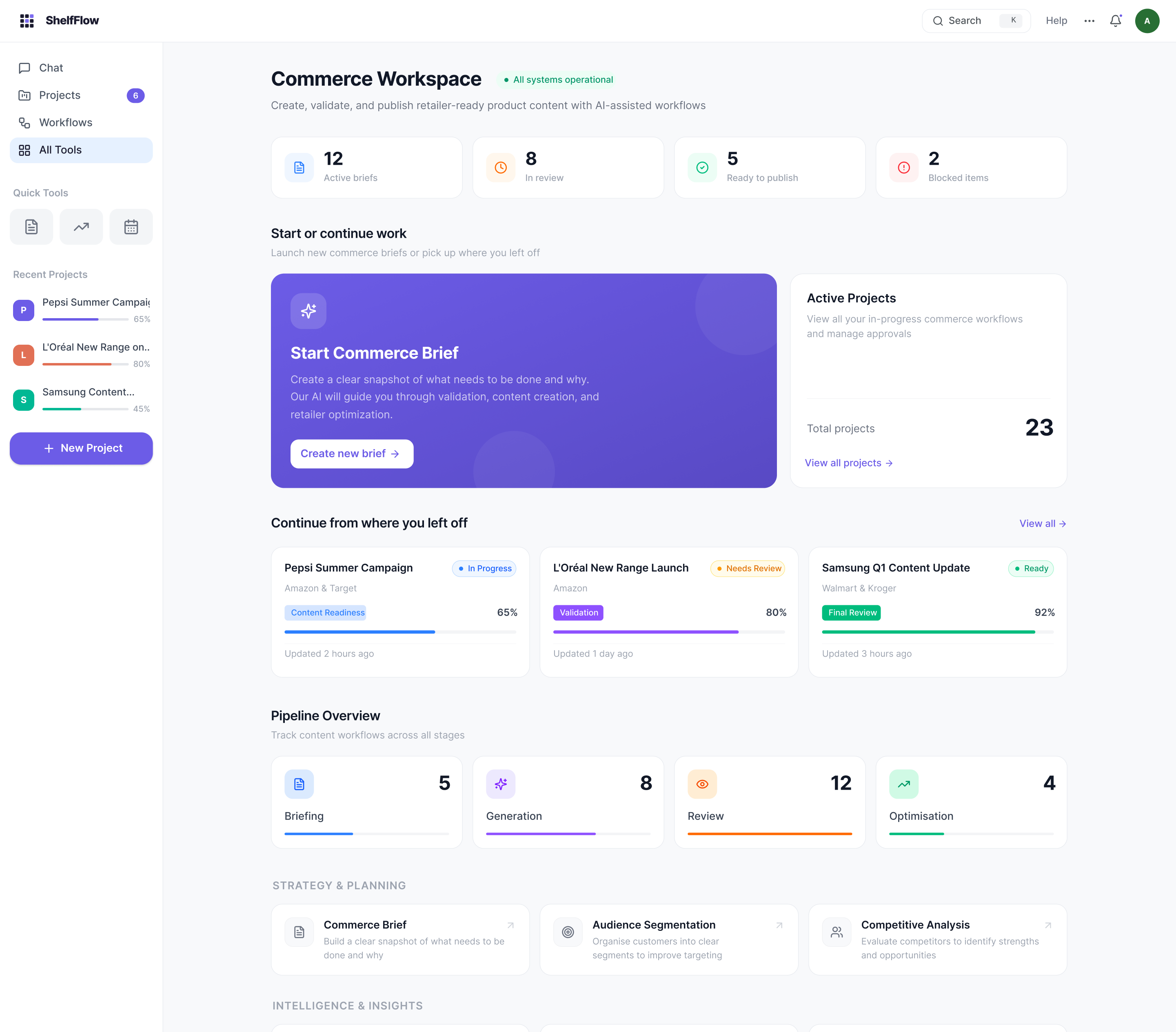

Commerce Workspace. The single surface where operators track active briefs, pipeline status and AI agent activity across all launches.

Every product launch triggered the same cascade of manual work. Teams weren't failing at content creation. They were drowning in coordination.

A single product launch at an enterprise CPG company generates content across multiple SKUs, multiple retailers, and multiple markets. Each retailer has different spec requirements, character limits, image guidelines, and compliance rules. Amazon needs bullet-point formats within strict character counts. Sephora requires ingredient narratives in a different tone. Walmart has its own taxonomy and claim restrictions.

The content team was producing all of this by hand. One brief would take weeks to turn into retailer-ready packages. Errors compounded silently. Versioning happened in file names. Approvals moved through email threads that no one could reconstruct. When a launch slipped, no one could point to where the breakdown actually happened.

This wasn't a creativity problem or a tooling gap. It was a coordination overhead problem that grew multiplicatively: every new SKU, retailer or market multiplied the size of the matrix rather than adding to it.

- 6 SKUs × 6 retailers = 36 content packages from a single product brief. Each with unique spec requirements, character limits, and compliance constraints.

- No single source of truth. Content lived in spreadsheets, email threads, and shared drives. Version conflicts were constant. Teams regularly shipped outdated copy because they couldn't tell which file was current.

- Approval chains were invisible. No one knew who had approved what, or what was still pending. A single missing sign-off could block an entire launch, and the team often wouldn't discover it until the deadline.

- Multi-market duplication. Teams in different regions recreated the same content independently, introducing inconsistencies across markets that took days to reconcile.

- AI tools existed but weren't trusted. Teams experimented with generative AI but had no governance model. Without clear boundaries for what AI could and couldn't decide, nothing AI-generated shipped without full manual rewriting.

The real bottleneck wasn't content quality. It was decision architecture: who approves what, when, and with what authority.

Every enterprise content workflow has the same hidden structure: a series of decision gates where someone must say yes or no before work moves forward. The teams I studied didn't lack tools. They lacked a system that made decision authority explicit and enforceable.

Faster drafting wouldn't solve this. The team could generate copy in hours, but approvals took weeks because accountability was distributed across email threads, Slack messages and informal handoffs. No one could answer "who needs to sign off on this?" without asking three other people first.

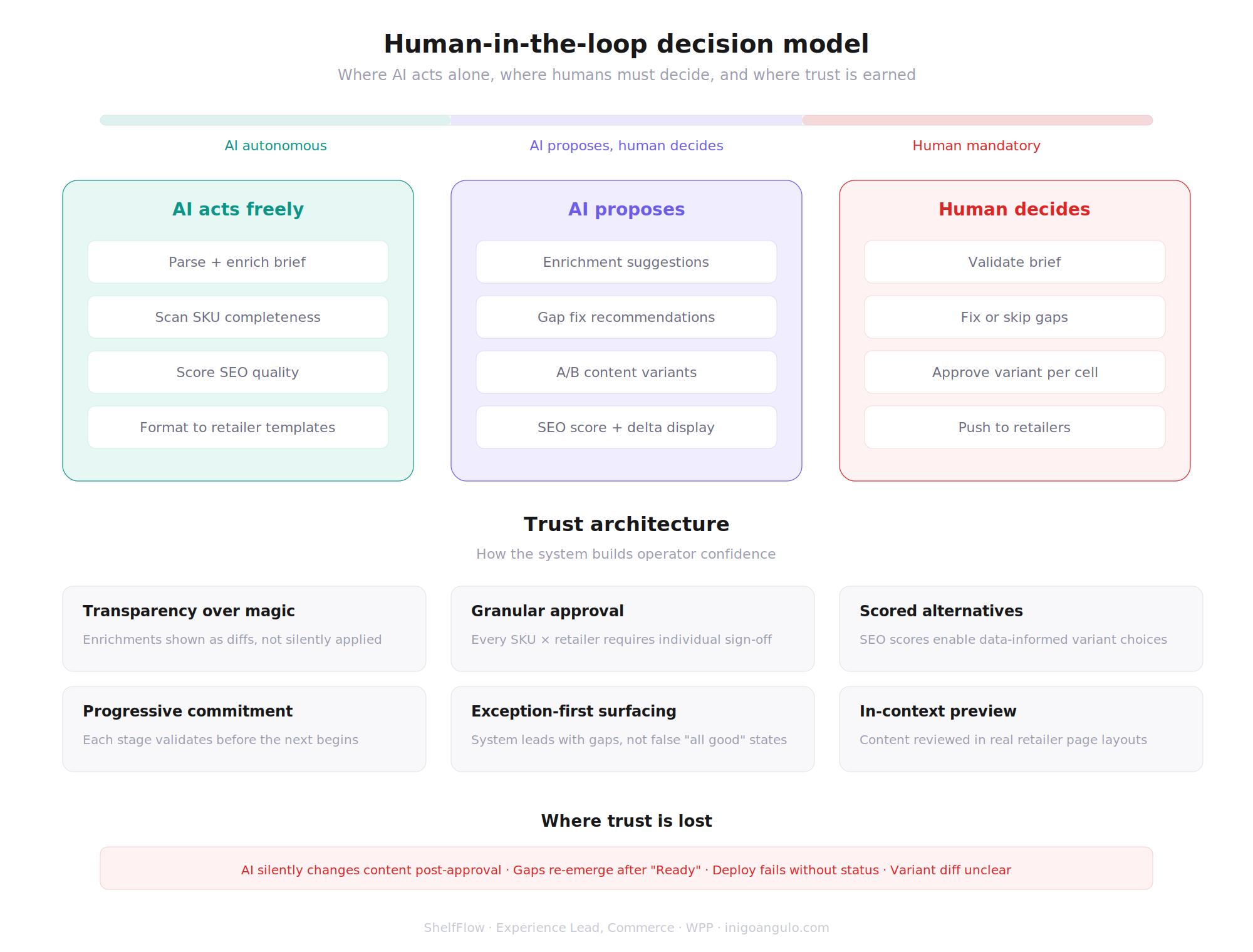

AI could accelerate the generation phase, but only if humans retained clear authority over what ships. The strategic insight: design the decision architecture first, then embed AI as an accelerator within it. Not the other way around.

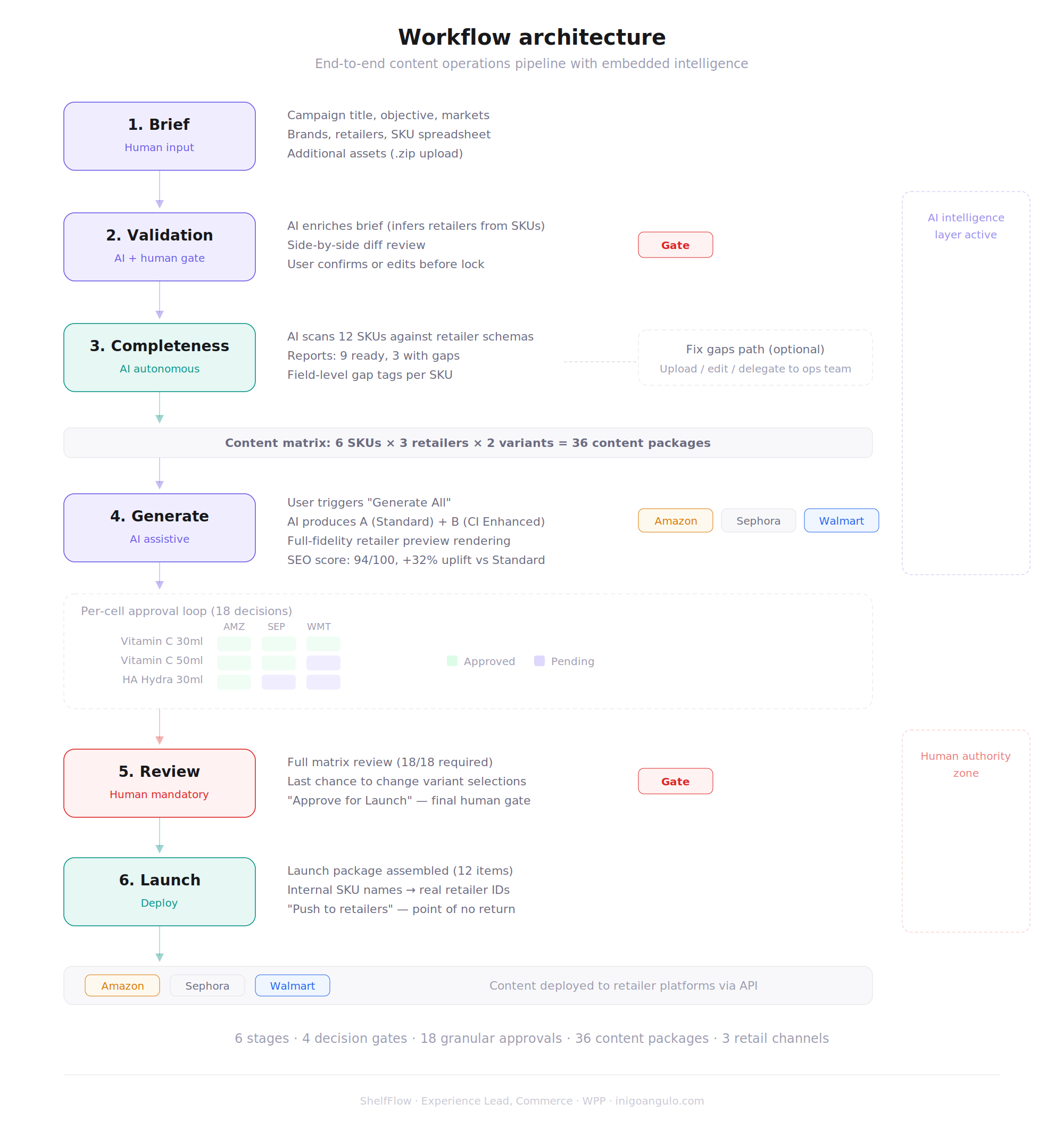

One brief in, 36 retailer-ready packages out. Six stages, four decision gates, zero ambiguity about who owns what.

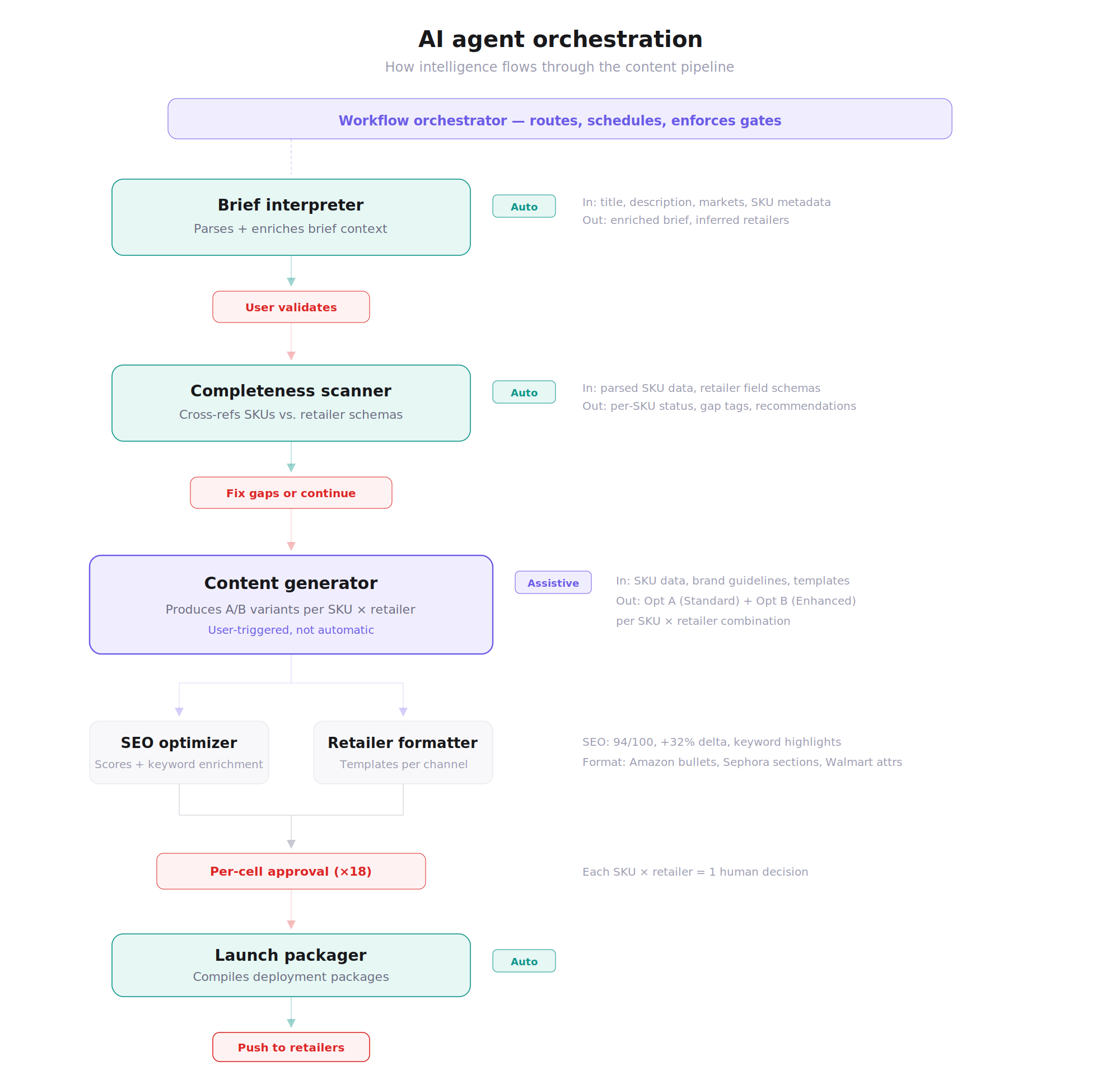

ShelfFlow is a structured workflow platform that takes a single product brief and coordinates the entire content production pipeline across SKUs, retailers, and markets. The system is built around 6 workflow stages with 4 mandatory decision gates where human approval is required before content advances.

The architecture follows a deliberate logic: separate generation from adaptation, separate adaptation from approval, and make every transition between phases explicit and auditable. This staging exists because each phase has different owners, different risks, and different criteria for "done".

Six-stage pipeline

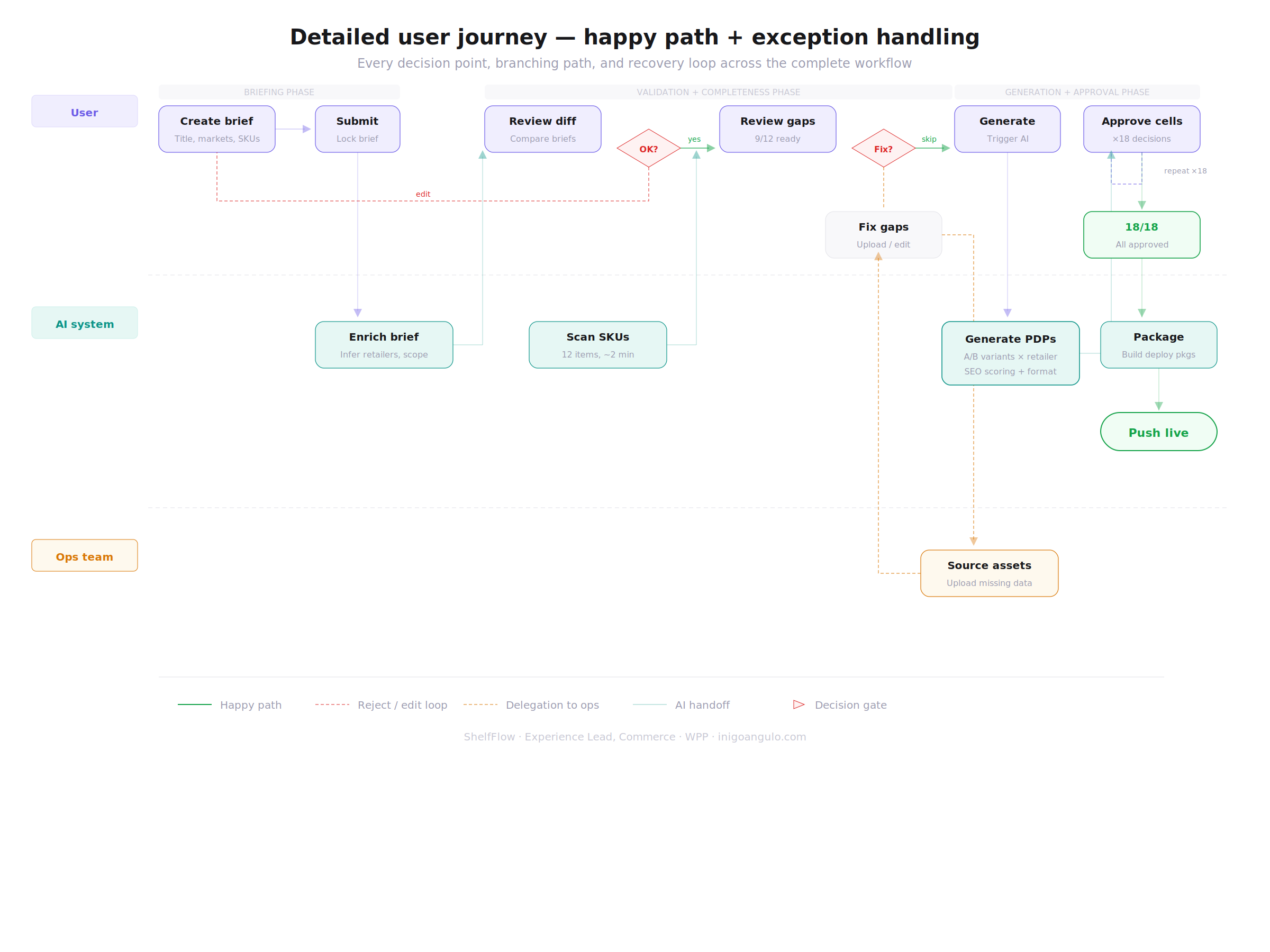

End-to-end workflow architecture. Six stages, four decision gates. AI operates between gates. Humans own every gate transition.

The operator interface: one screen to track 36 packages across 6 stages. No drilling, no email, no ambiguity.

The core interface is a workflow board where operators see every content package, its current stage, who owns it, and what's blocking it. Each package card surfaces its SKU, target retailer, current gate status, and AI confidence score at a glance.

The design goal was specific: at any moment, an operator should be able to answer "what's blocking this launch?" in under 5 seconds. That constraint shaped everything from information density to colour-coding to the decision to surface time-in-stage on every card. When you're managing 36 parallel packages, the interface has to surface problems, not require you to hunt for them.

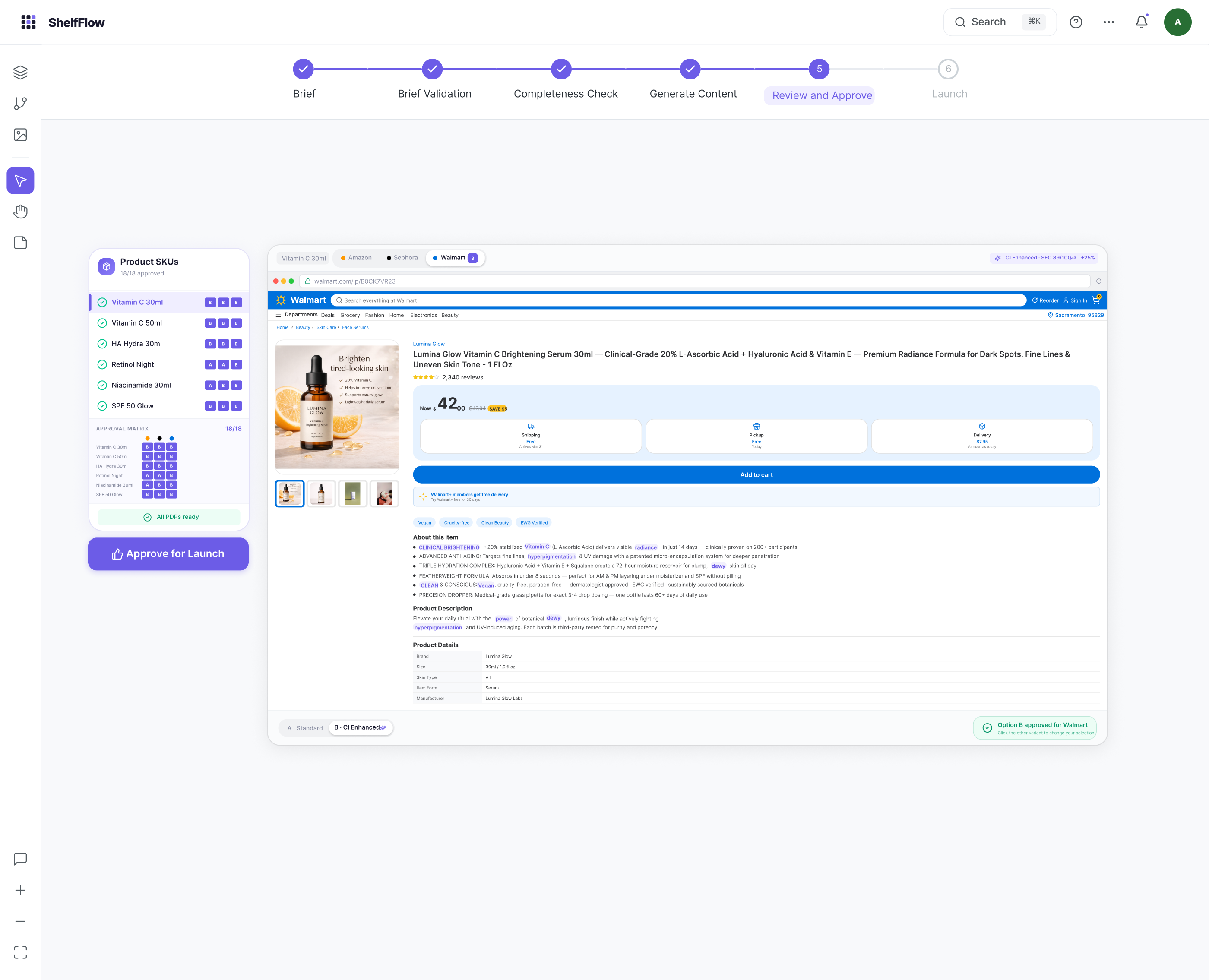

- Package cards show SKU, retailer, stage, owner, gate status, and time-in-stage. Colour-coded by urgency.

- Batch operations let operators approve, reject, or reassign multiple packages at once. Critical when managing 36 packages per launch.

- Filters by retailer, SKU, or gate status so operators can focus on what needs attention now.
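A minimal sketch of the filtering behind the board, assuming each card carries the fields listed above. The names `PackageCard`, `needs_attention` and `urgency`, the gate-status strings and the 24/72-hour colour thresholds are hypothetical. They only show how filters plus a time-in-stage sort can answer "what's blocking this launch?" without hunting.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PackageCard:
    # Hypothetical card model mirroring the fields listed above.
    sku: str
    retailer: str
    stage: str
    owner: str
    gate_status: str        # assumed values: "pending", "approved", "blocked"
    hours_in_stage: float

def needs_attention(
    cards: list[PackageCard],
    retailer: Optional[str] = None,
    sku: Optional[str] = None,
    gate_status: Optional[str] = None,
) -> list[PackageCard]:
    """Apply the board filters, then put the longest-stalled packages first."""
    selected = [
        c for c in cards
        if (retailer is None or c.retailer == retailer)
        and (sku is None or c.sku == sku)
        and (gate_status is None or c.gate_status == gate_status)
    ]
    return sorted(selected, key=lambda c: c.hours_in_stage, reverse=True)

def urgency(card: PackageCard, warn_after: float = 24.0, alert_after: float = 72.0) -> str:
    """Map gate status and time-in-stage to a colour band (thresholds are hypothetical)."""
    if card.gate_status == "blocked" or card.hours_in_stage >= alert_after:
        return "red"
    if card.hours_in_stage >= warn_after:
        return "amber"
    return "green"

cards = [
    PackageCard("SKU-01", "Amazon", "S4", "Ana", "pending", 30.0),
    PackageCard("SKU-02", "Walmart", "S4", "Ben", "blocked", 5.0),
    PackageCard("SKU-01", "Sephora", "S5", "Ana", "pending", 80.0),
]
for c in needs_attention(cards, gate_status="pending"):
    print(c.sku, c.retailer, urgency(c))  # SKU-01 Sephora red, then SKU-01 Amazon amber
```

Sorting by time-in-stage puts the most likely blockers at the top, so the operator reads the answer instead of searching for it.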

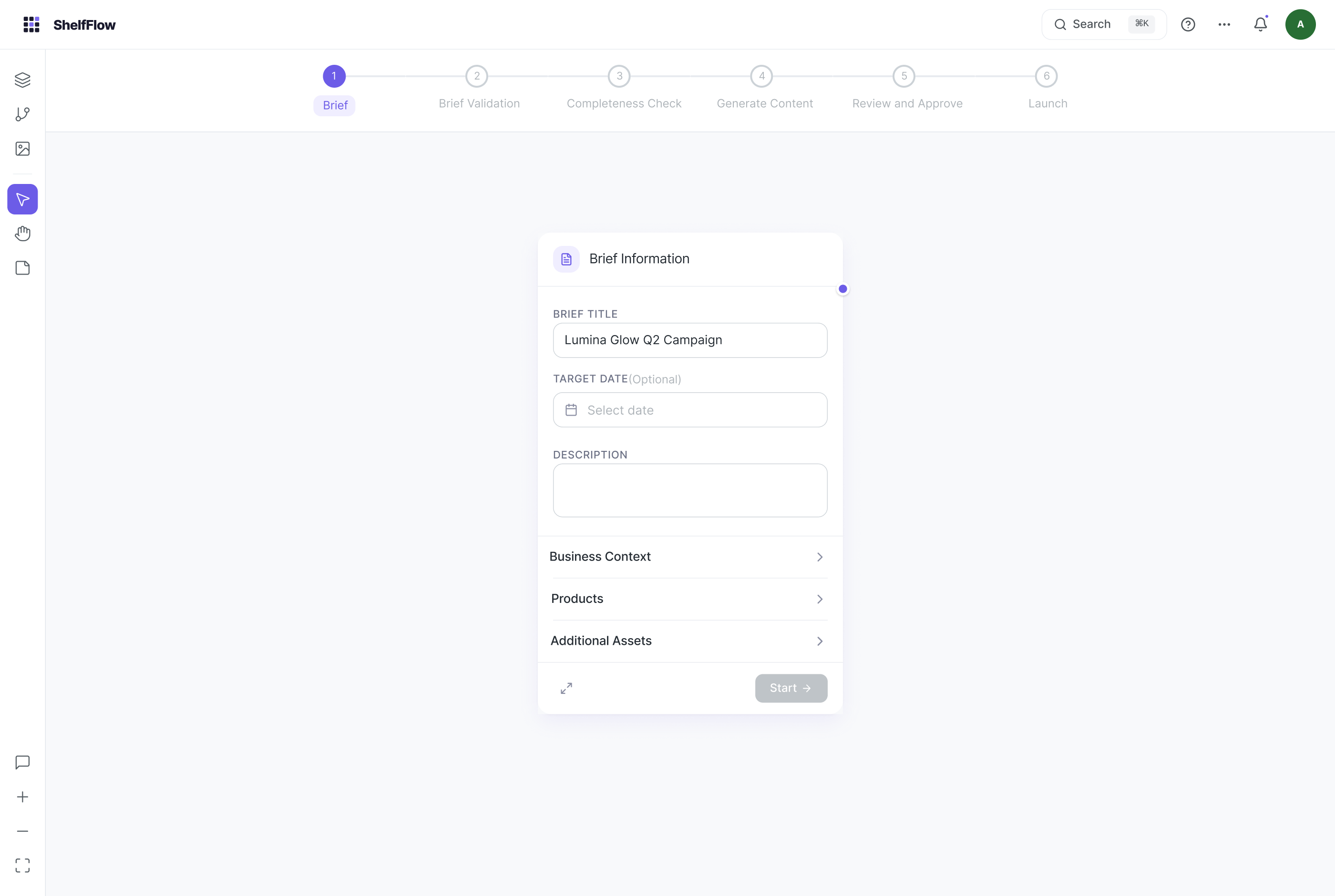

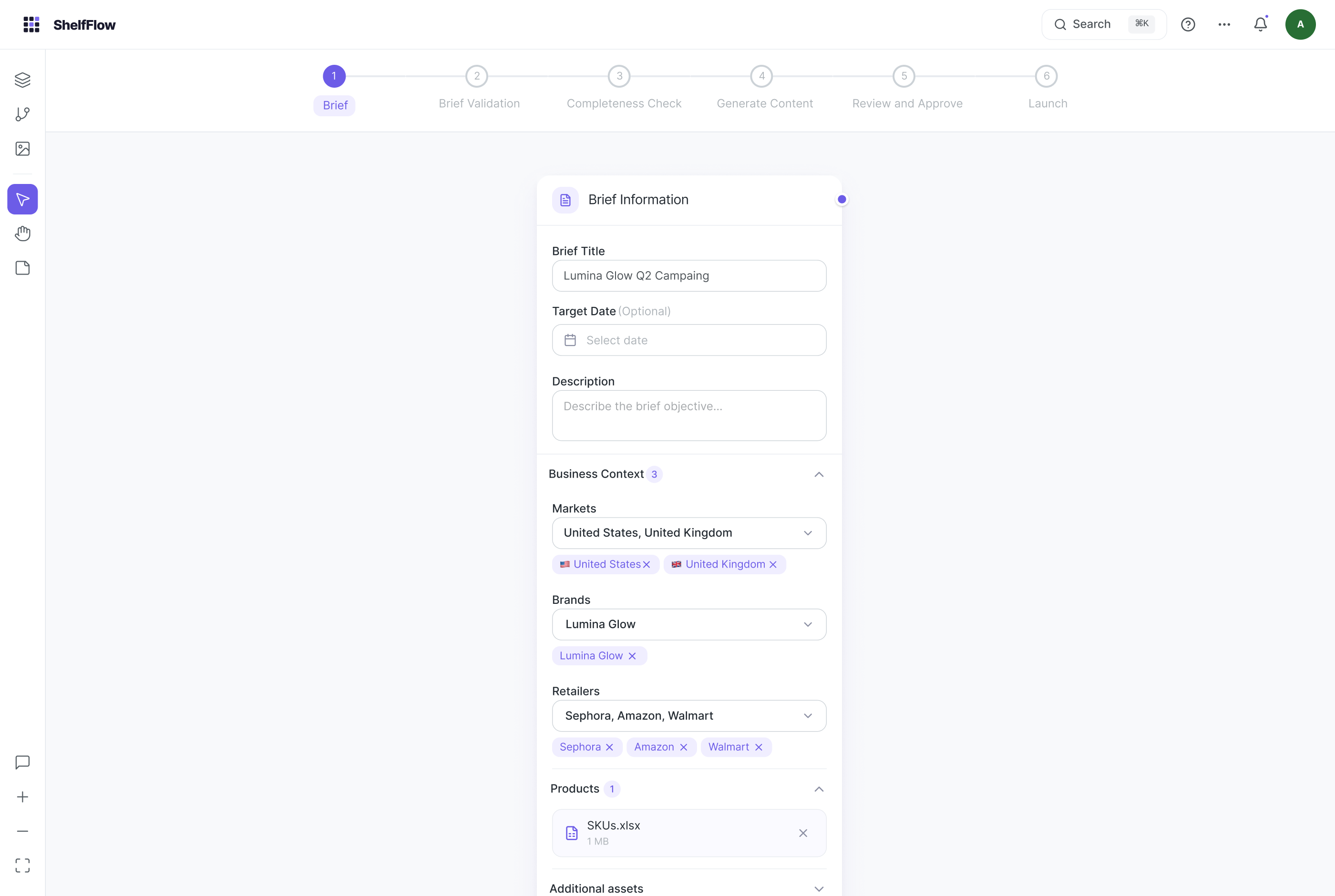

S1 · Brief intake / empty state

S1 · Brief filled / markets, brands, retailers

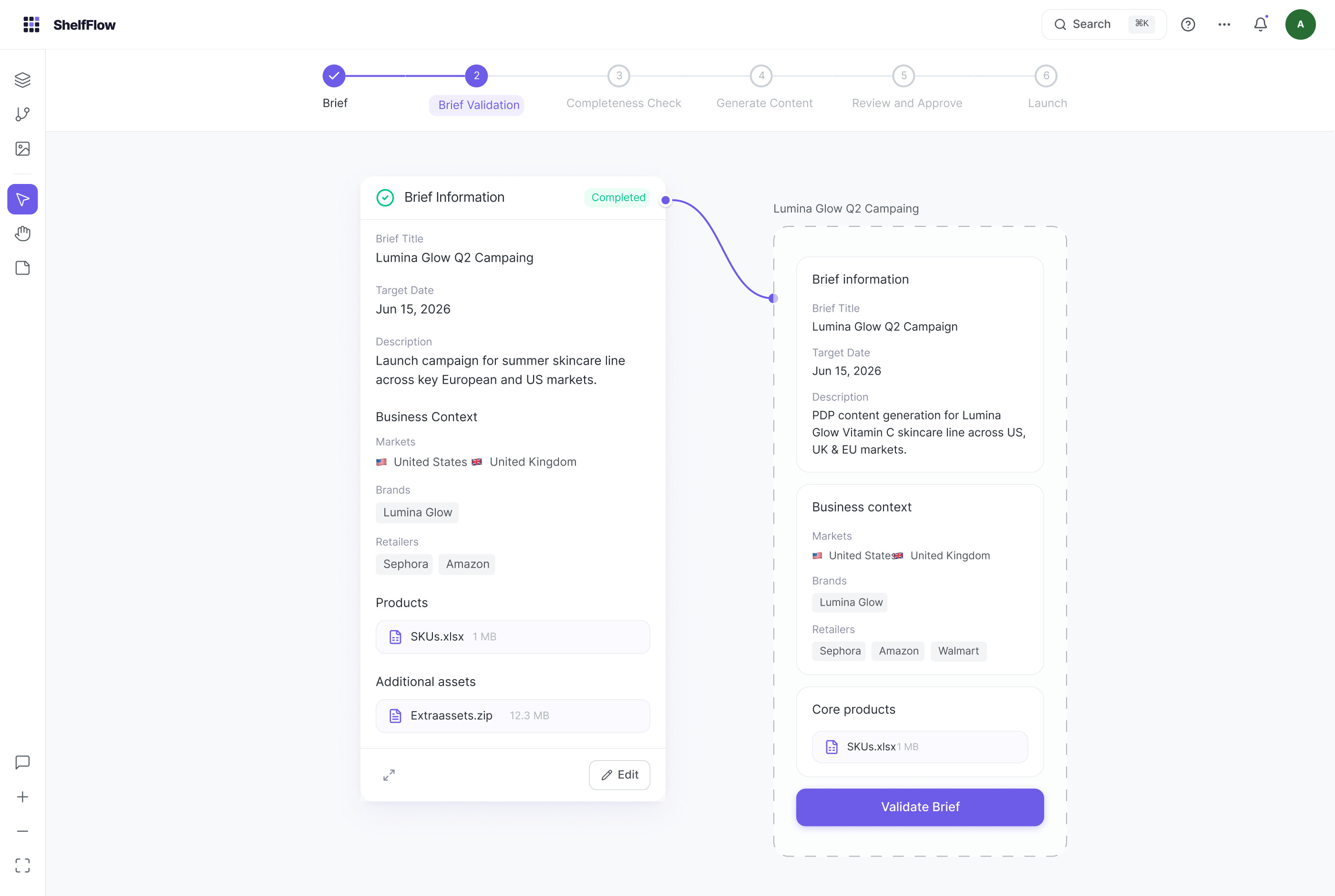

Gate 1: Brief validation. Side-by-side diff showing user brief vs. AI-enriched brief. Human confirms or edits before lock.

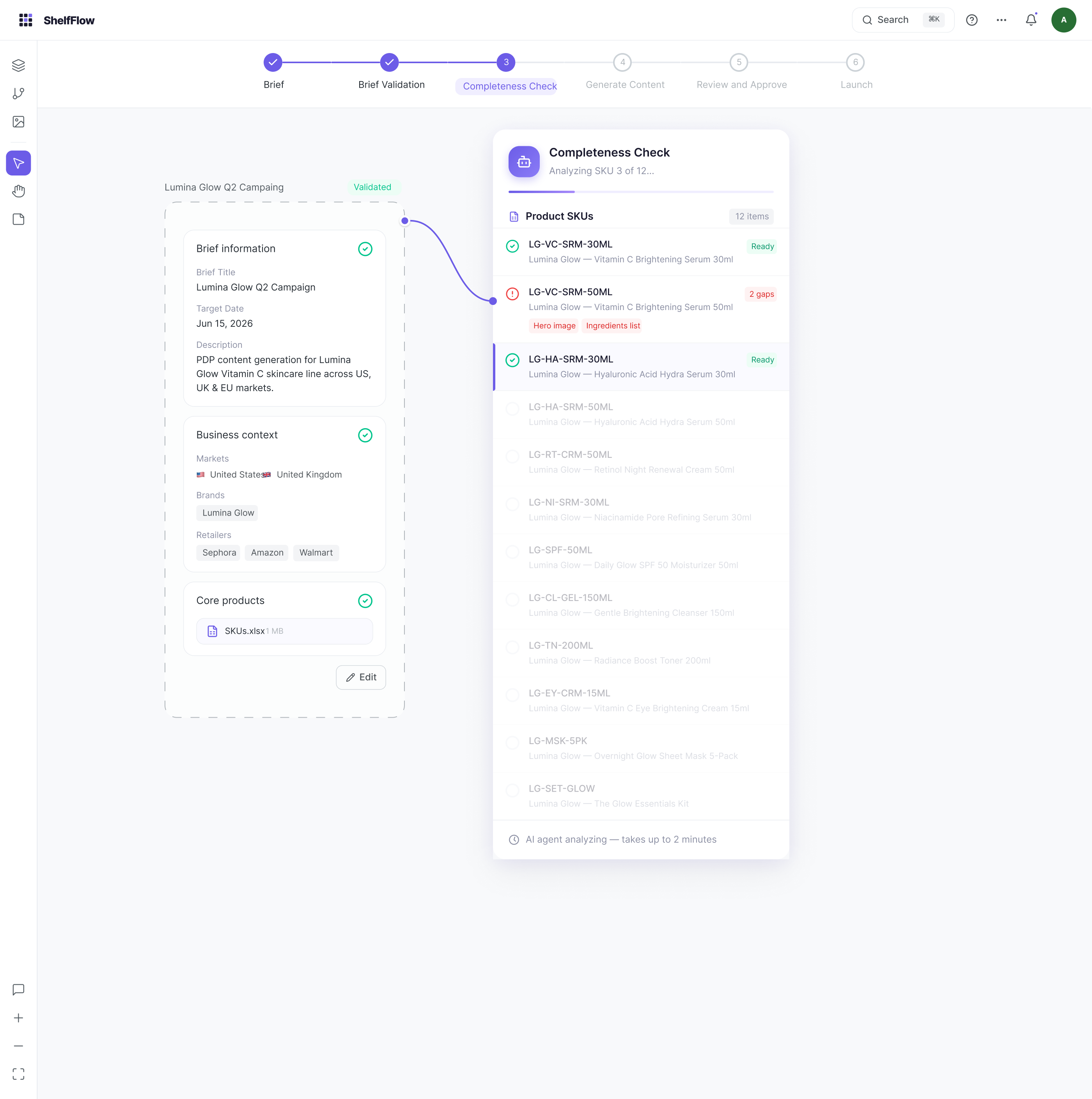

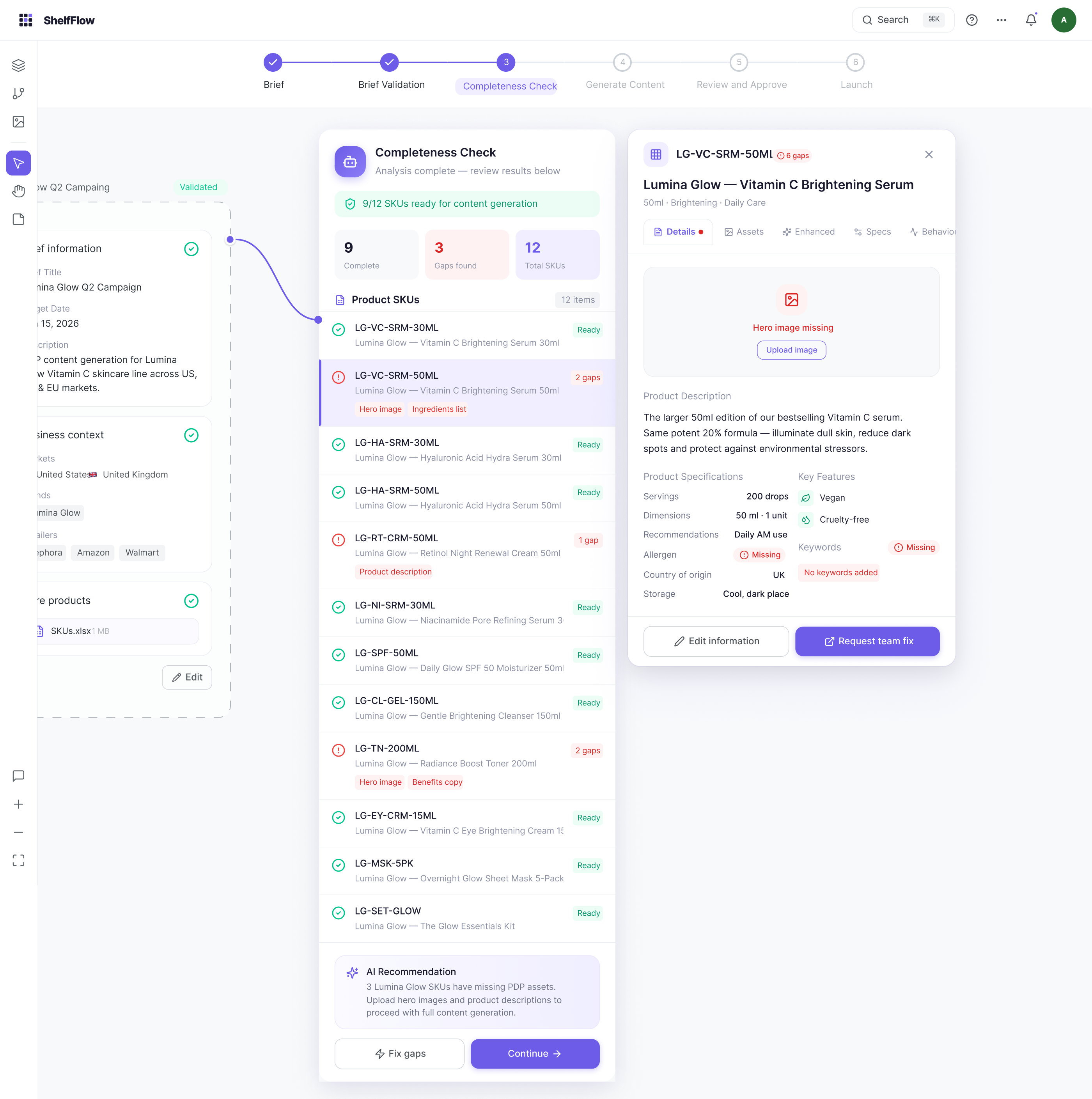

S3 · Completeness scanning / AI analysing SKUs

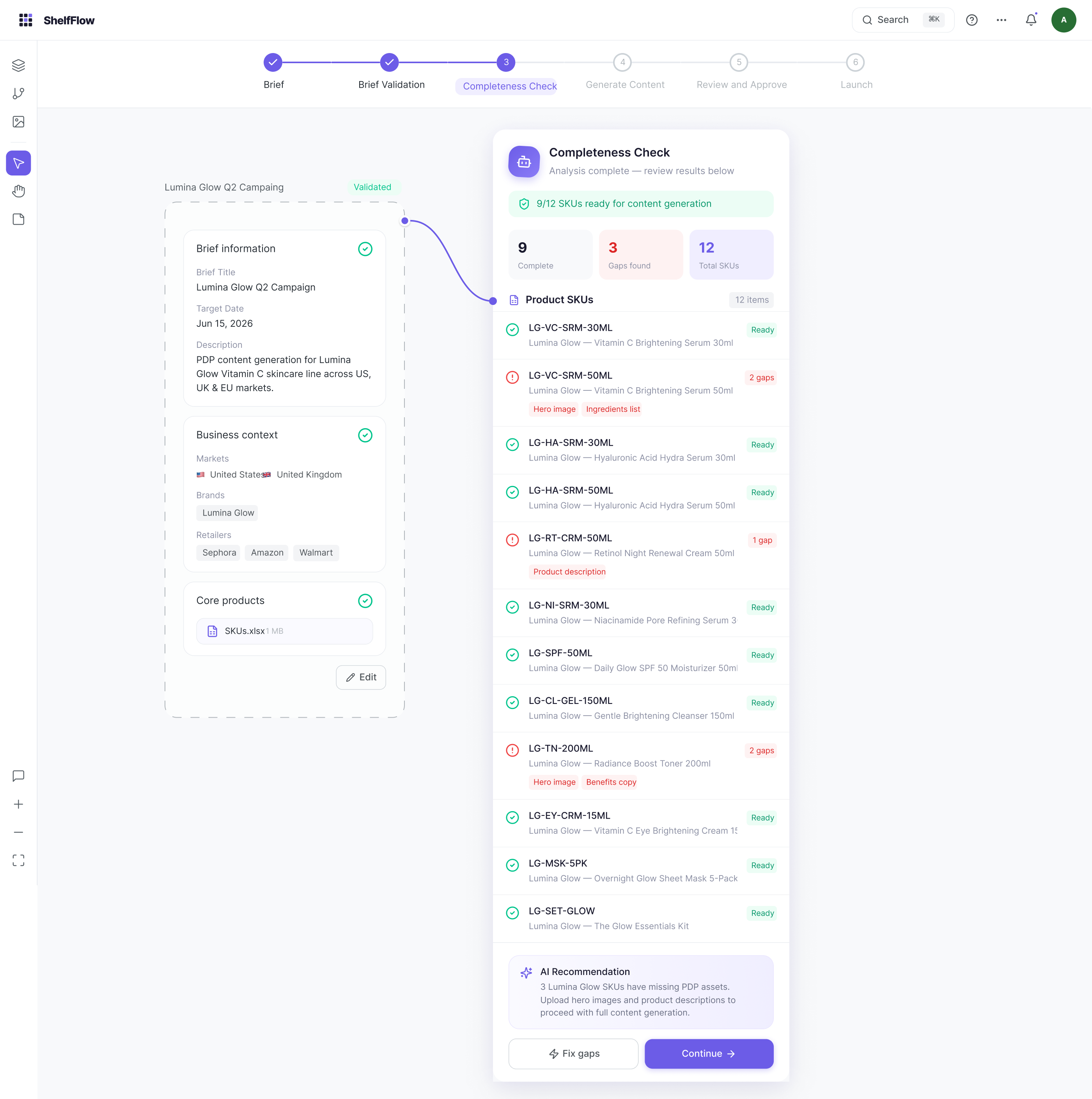

S3 · Results / 9 ready, 3 with gaps

SKU detail panel. Field-level gap detection with AI recommendations. One-click delegation to the ops team for resolution before the package can advance.

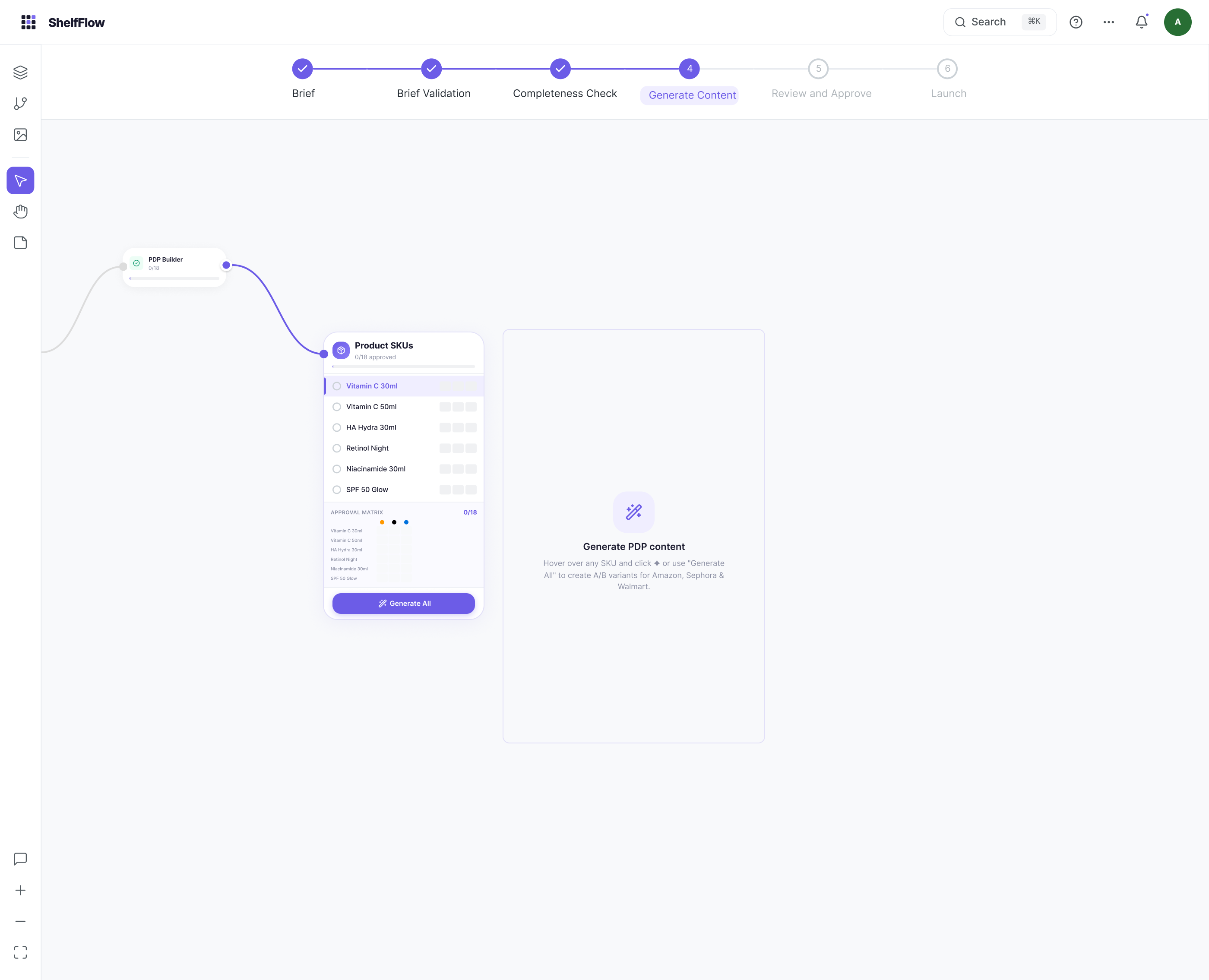

S4 · Generate / select SKUs, trigger AI

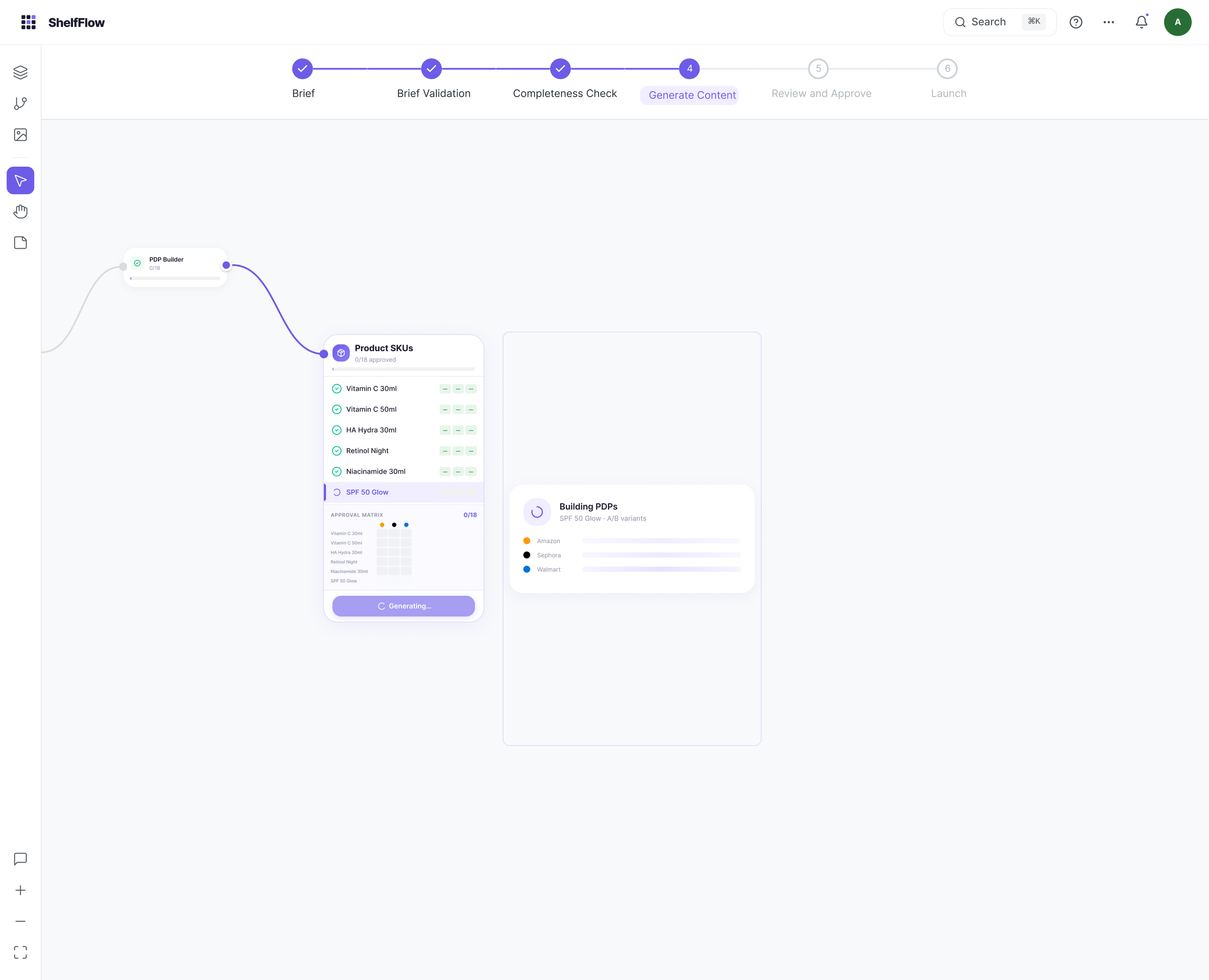

S4 · Generating / A/B variants per retailer

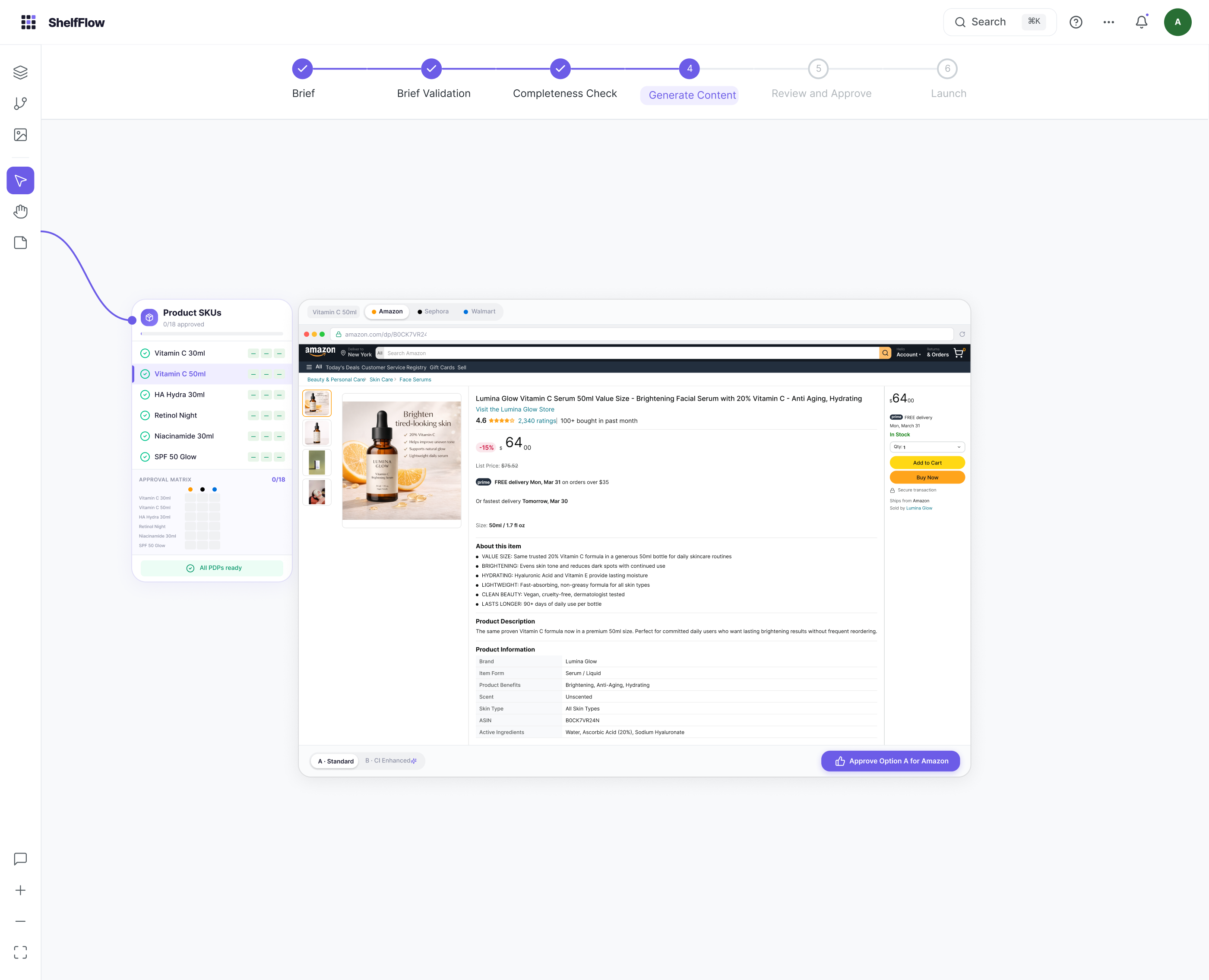

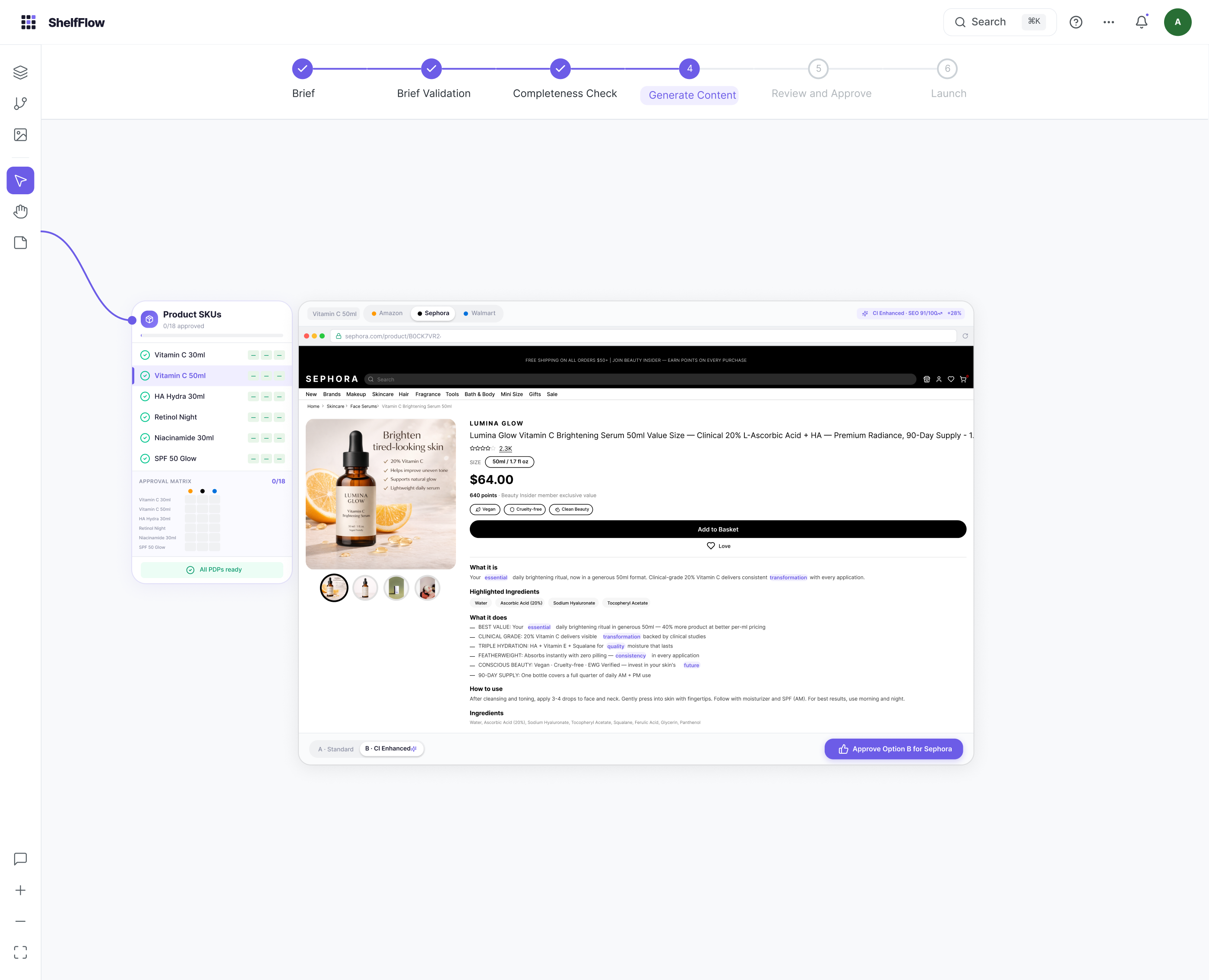

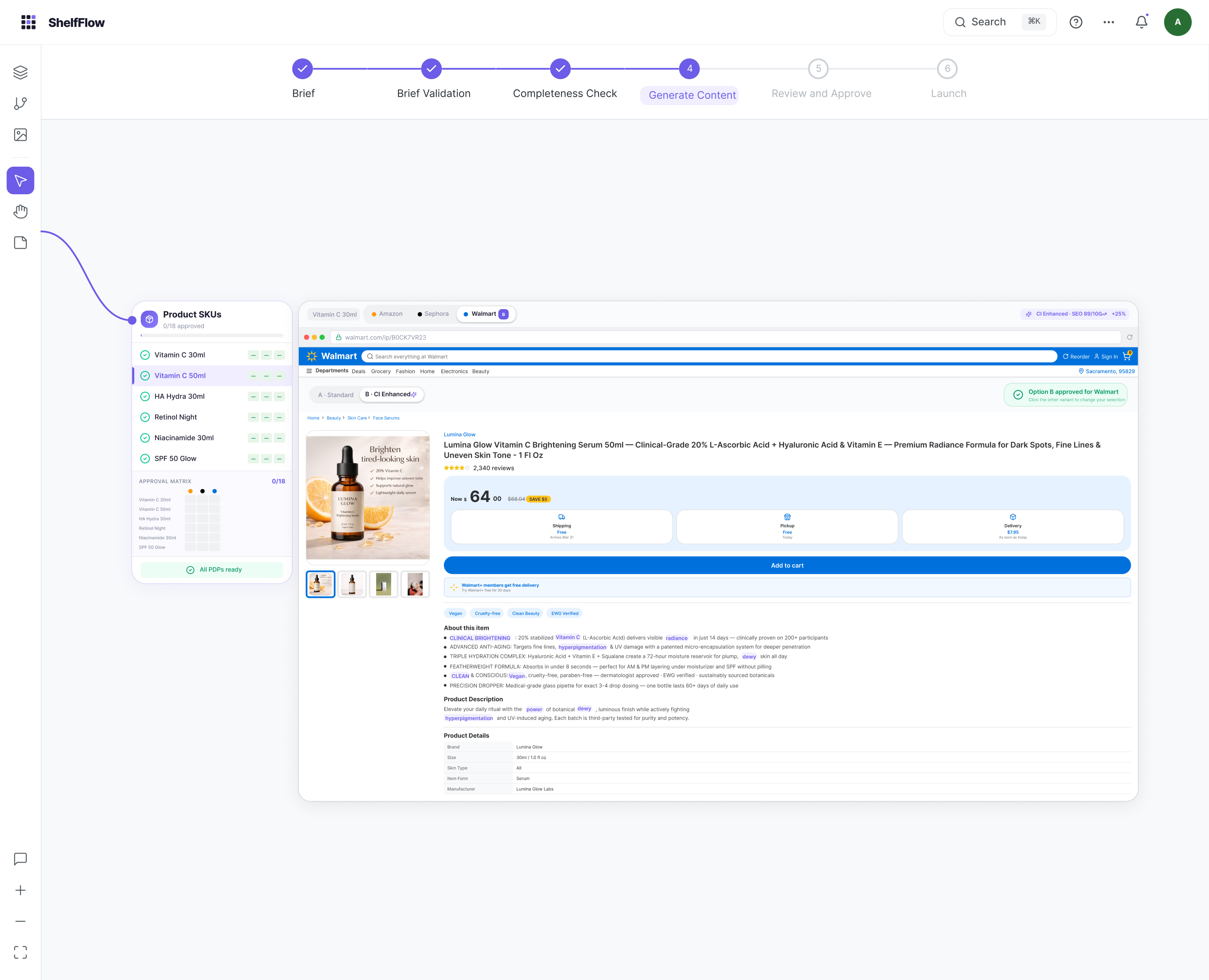

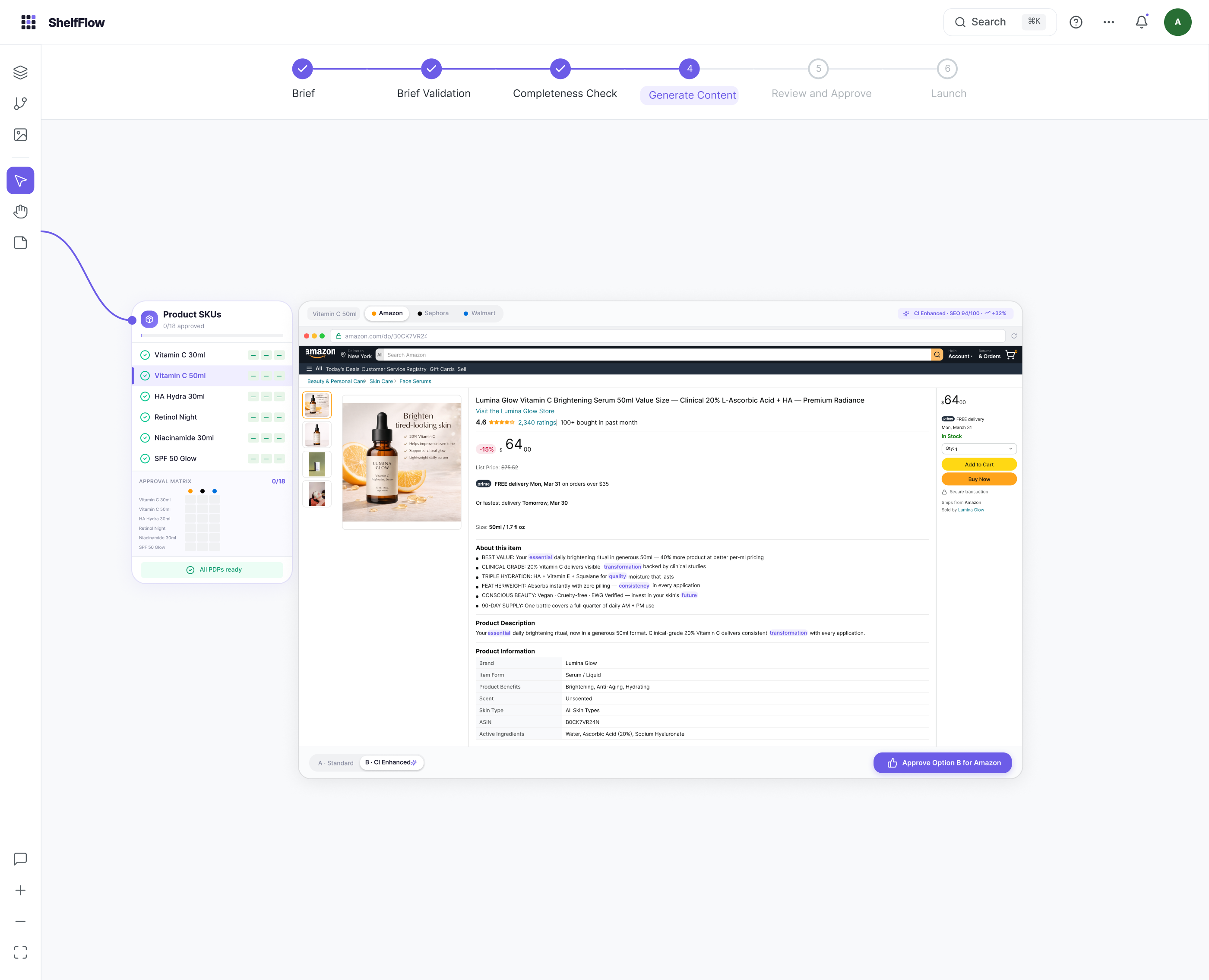

Amazon · Full-fidelity PDP preview

Sephora · Adapted to retailer format

Walmart · Marketplace specs applied

Amazon · Enhanced variant (Option B)

Same SKU, three retailers, completely different specs. Previews render in each retailer's actual page layout so operators review what the customer will see, not an abstraction.

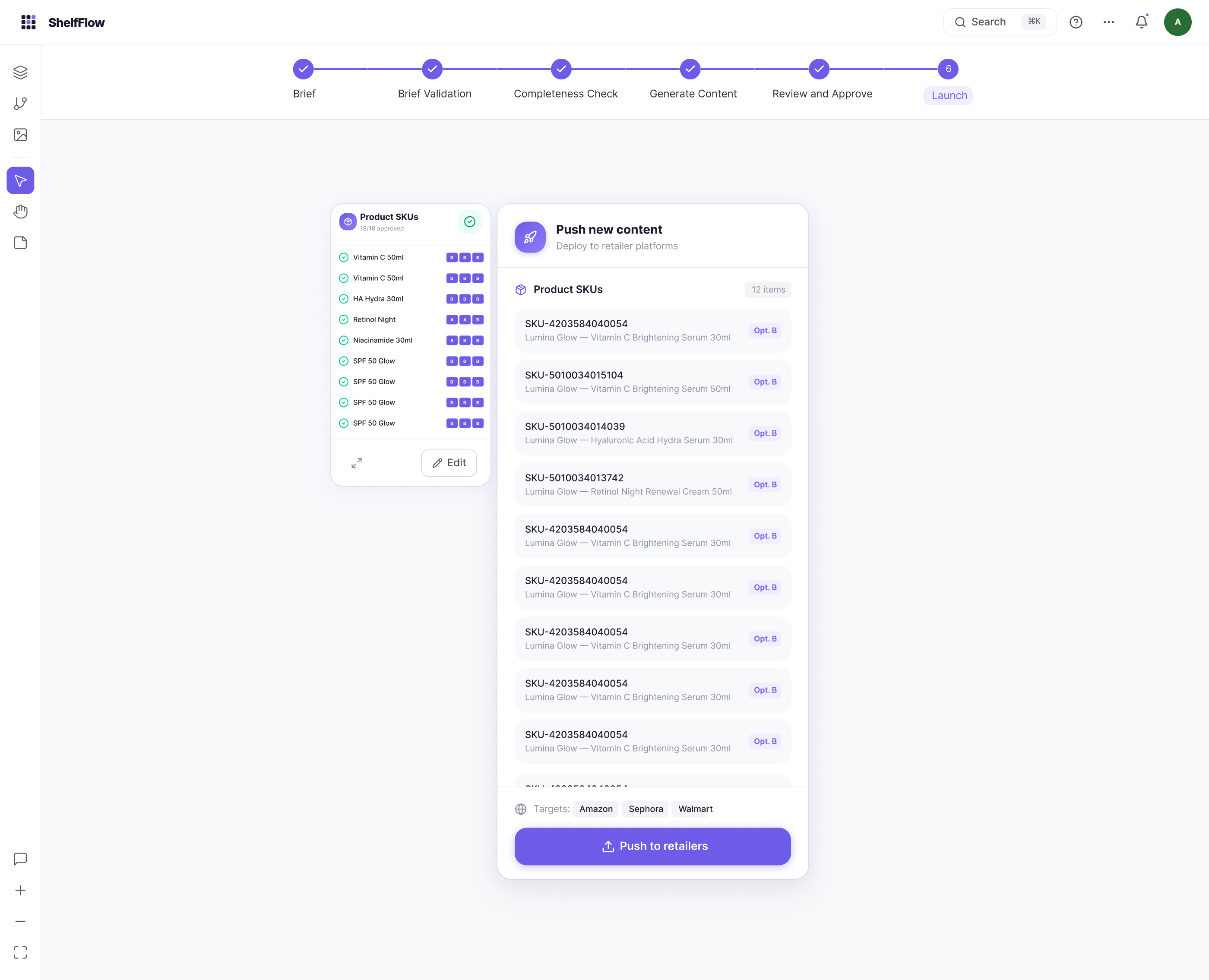

Gate 4: Full matrix review. Per-cell approval across 18 human decisions, every SKU × retailer combination. "Approve for Launch" is the final gate.

S6 · Launch. Package assembled, SKU names mapped to retailer IDs, push to retailers via API. Point of no return.

Full user journey mapping. Happy path, branching decisions, rejection loops and recovery flows. Rejection is not an error state. It is a structured feedback loop that routes content back with reviewer notes.

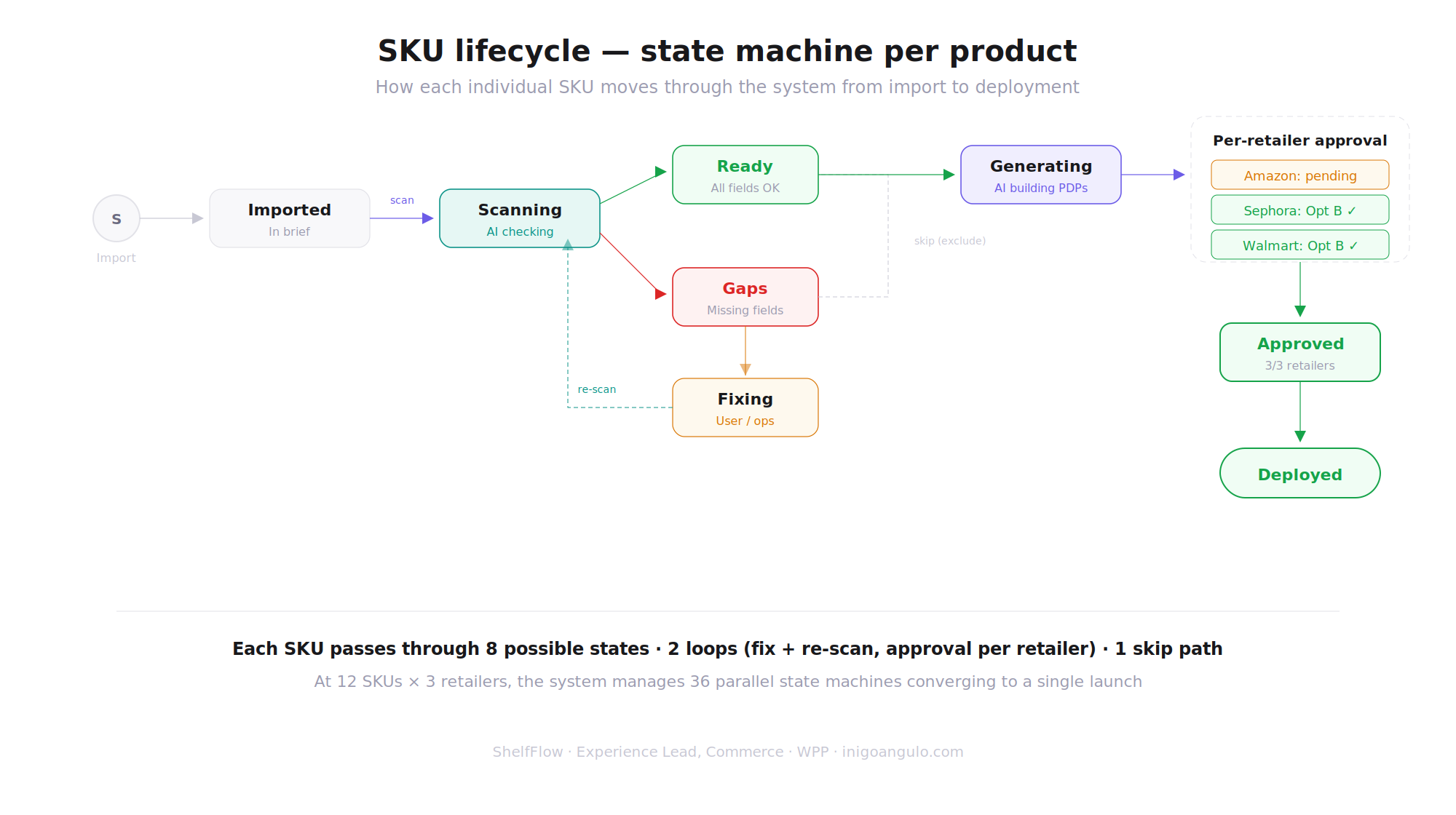

Every content package has a state machine. No package moves without an explicit transition, and no transition happens without a record.

Each of the 36 content packages operates as an independent state machine. States are explicit, transitions are guarded by gate conditions, and every state change is logged with who triggered it and why.

This was a deliberate architectural choice. In the existing workflow, packages got stuck in ambiguous in-between states: "probably approved", "waiting on someone", "I think legal saw it". The state machine eliminates ambiguity. A package is either Draft, In Review, Approved, Published, Rejected, or Blocked. Nothing else. Every transition requires an explicit human action or a system event, and the audit trail is permanent.
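The package lifecycle can be sketched as a guarded state machine. This is a minimal illustration, not ShelfFlow's implementation. The six states come from the paragraph above, but the transition table `ALLOWED` and the names `ContentPackage`, `Transition` and `transition` are assumptions. Every move is checked against the table and appended to a log with actor, reason and timestamp.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum

class State(Enum):
    DRAFT = "Draft"
    IN_REVIEW = "In Review"
    APPROVED = "Approved"
    PUBLISHED = "Published"
    REJECTED = "Rejected"
    BLOCKED = "Blocked"

# Hypothetical transition table: the only legal moves out of each state.
ALLOWED = {
    State.DRAFT: {State.IN_REVIEW, State.BLOCKED},
    State.IN_REVIEW: {State.APPROVED, State.REJECTED, State.BLOCKED},
    State.REJECTED: {State.DRAFT},      # rejection loops back with reviewer notes
    State.BLOCKED: {State.DRAFT},
    State.APPROVED: {State.PUBLISHED},
    State.PUBLISHED: set(),             # point of no return
}

@dataclass(frozen=True)
class Transition:
    src: State
    dst: State
    actor: str      # a human user or a system event
    reason: str
    at: datetime

@dataclass
class ContentPackage:
    sku: str
    retailer: str
    state: State = State.DRAFT
    log: list[Transition] = field(default_factory=list)

    def transition(self, dst: State, actor: str, reason: str) -> None:
        """Move to dst only if the table allows it, and record who, when and why."""
        if dst not in ALLOWED[self.state]:
            raise ValueError(f"{self.state.value} -> {dst.value} is not allowed")
        if not reason:
            raise ValueError("every transition needs a recorded reason")
        self.log.append(Transition(self.state, dst, actor, reason, datetime.now(timezone.utc)))
        self.state = dst
```

Because the log is append-only and every entry names an actor, "who approved this?" becomes a lookup rather than a reconstruction.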

Content package states

SKU lifecycle: 8 possible states, 2 loops (fix + re-scan), per-retailer approval. 36 parallel state machines converging to a single launch.

Every design decision came down to the same tension: how fast can we move without losing control over what ships?

In enterprise commerce, shipping wrong content to a retailer is more expensive than a delayed launch. Incorrect claims create regulatory exposure. Mismatched specs trigger portal rejections that delay an entire product line. These aren't hypothetical risks. They're the operational reality that shaped every trade-off in the system.

The decisions below weren't made in a vacuum. Each one responded to a specific failure mode I observed during research, or a structural constraint imposed by the multi-retailer, multi-market reality of the workflow.

| Decision | Chosen | Rejected | Why |
|---|---|---|---|
| AI authority | Draft-only (never ships) | Auto-publish with confidence threshold | Enterprise compliance requires human sign-off on every claim that reaches the shelf. AI that ships autonomously creates regulatory liability. The value of AI here is speed-to-draft, not autonomy. |
| Gate model | 4 mandatory gates, no bypass | Flexible approval chains | Flexibility introduces ambiguity. When a retailer or legal team asks "who approved this?", the system must return one unambiguous answer. Configurable chains make that impossible to guarantee. |
| Package granularity | 1 package = 1 SKU × 1 retailer | Grouped by SKU or by retailer | Retailer specs diverge too much for grouping to be safe. Amazon character limits differ from Sephora's by 40%+. Grouping hides critical differences that cause portal rejections downstream. |
| State machine | Explicit states, logged transitions | Implicit progress tracking | Every state change records who, when, and why. This isn't just UX. It's the audit trail that makes the system legally defensible when a claim is questioned months after launch. |
| Staged generation | Brief → Draft → Adapt → Review | Single-step generation | Generating retailer-adapted content in one pass produces output that looks right but fails spec validation. Staged generation lets operators catch problems at each layer before they compound. |
| Batch operations | Batch approve only after individual review | Unrestricted batch approval | v1 allowed batch-approving packages that hadn't been individually reviewed. Testing revealed operators rubber-stamped to save time. Added a "reviewed" flag requirement. Speed without review is liability. |

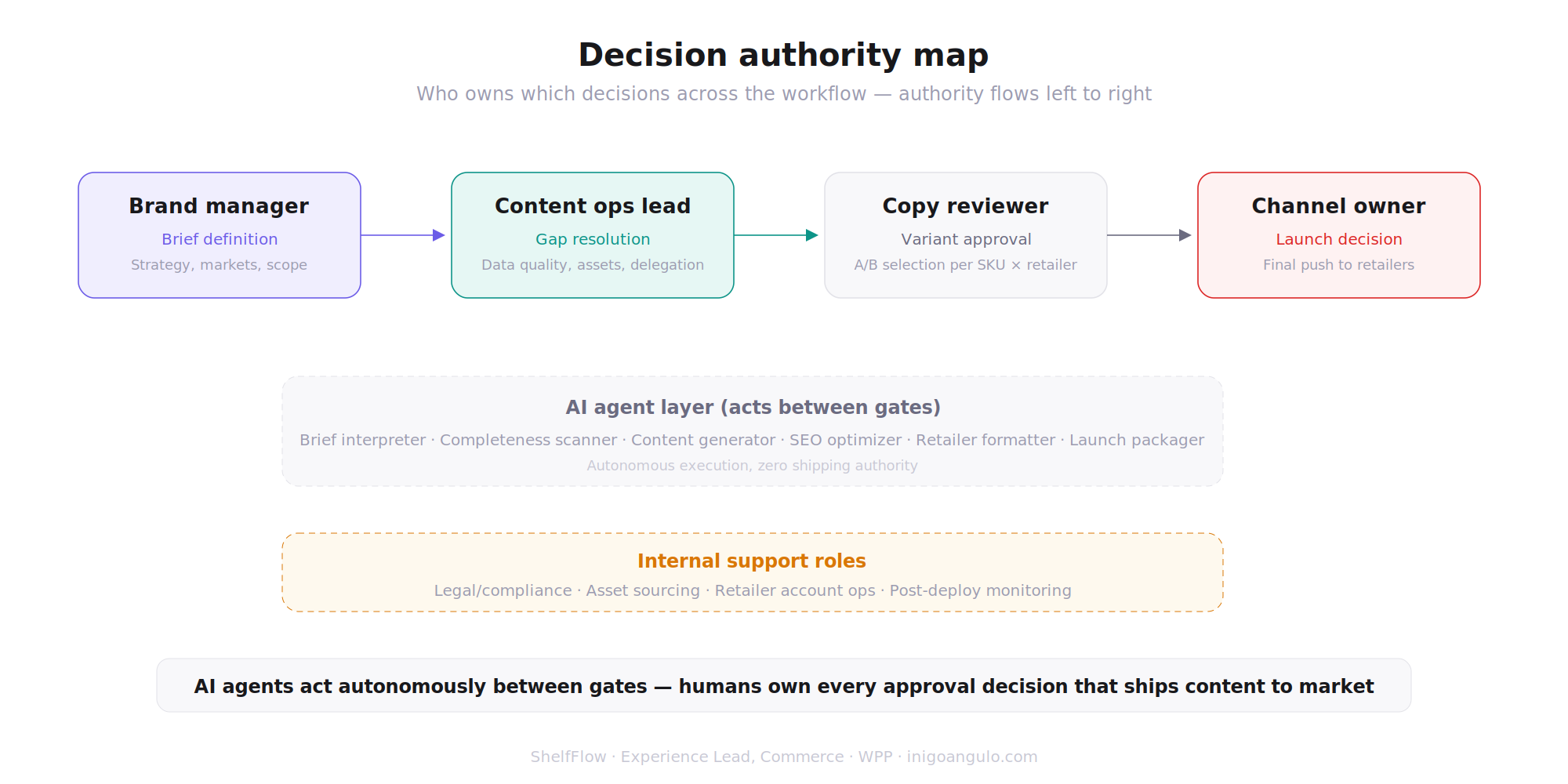

Decision authority map: who owns which decisions across the workflow. AI agents act autonomously between gates. Humans own every approval that ships.
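The batch-operations trade-off from the decision table can be sketched as a guard on batch approval. This assumes a per-package `reviewed` flag that is set when an operator opens and reviews the package individually. `Package` and `batch_approve` are hypothetical names. Unreviewed packages come back as skipped rather than silently approved, so they stay visible on the board.

```python
from dataclasses import dataclass

@dataclass
class Package:
    package_id: str
    reviewed: bool = False   # hypothetical flag, set when reviewed individually
    approved: bool = False

def batch_approve(packages: list[Package]) -> tuple[list[str], list[str]]:
    """Approve only individually reviewed packages; return (approved, skipped) IDs."""
    approved, skipped = [], []
    for pkg in packages:
        if pkg.reviewed:
            pkg.approved = True
            approved.append(pkg.package_id)
        else:
            skipped.append(pkg.package_id)   # stays pending and visible on the board
    return approved, skipped
```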

Six AI agents, each with a bounded scope. Every agent accelerates work. None can ship content.

ShelfFlow embeds 6 specialised AI agents across the pipeline. Each agent operates within a clearly defined boundary: it can draft, suggest, check, or flag, but it cannot approve or publish. This distinction is fundamental to the system's design.

The AI layer exists to eliminate the repetitive, high-volume work that made manual workflows collapse at scale: parsing briefs into structured SKU matrices, generating retailer-adapted copy that respects character limits, cross-checking claims against compliance databases, and detecting inconsistencies across 36 parallel content packages. These are tasks where AI creates genuine operational value. The critical decisions (the approvals and sign-offs that carry legal and brand accountability) remain with humans.
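To illustrate "draft or flag, never approve", here is a minimal spec-check agent. The retailer names and character limits in `SPEC_LIMITS` are placeholders, not real Amazon, Sephora or Walmart specs. `check_spec` and `Flag` are hypothetical names. The agent can only return flags. Nothing in it can mark a package approved.

```python
from dataclasses import dataclass

# Placeholder limits for illustration only; these are NOT real retailer specs.
SPEC_LIMITS: dict[str, dict[str, int]] = {
    "RetailerA": {"title": 200, "bullet": 250},
    "RetailerB": {"title": 120, "bullet": 150},
}

@dataclass(frozen=True)
class Flag:
    field_name: str
    message: str

def check_spec(retailer: str, content: dict[str, str]) -> list[Flag]:
    """Flag fields that exceed the retailer's character limit.

    An empty list means "nothing flagged", not "approved":
    approval stays a human gate decision.
    """
    limits = SPEC_LIMITS[retailer]
    flags = []
    for field_name, text in content.items():
        limit = limits.get(field_name)
        if limit is not None and len(text) > limit:
            flags.append(Flag(field_name, f"{len(text)} chars > {limit} ({retailer})"))
    return flags
```

The same draft can pass one retailer's check and fail another's, which is why the package granularity is one SKU per retailer.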

Embedded AI agents

Every agent drafts or flags. No agent approves. Zero autonomous shipping.

AI agent orchestration. The workflow orchestrator routes tasks to the right agent, schedules generation jobs and enforces gate rules. No agent can bypass a gate.

Human-in-the-loop decision model. Three authority zones: AI acts freely (parse, scan, format), AI proposes (enrichments, variants), human decides (validate, approve, ship). The boundary between zones is the product's trust architecture.
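The three authority zones can be expressed as a routing rule that the orchestrator applies before running any action. The action names mirror the caption above. The mapping, the function `route` and its return values are hypothetical. AI requests in the "human decides" zone are rejected outright, not queued.

```python
from enum import Enum

class Zone(Enum):
    AI_ACTS = "AI acts freely"
    AI_PROPOSES = "AI proposes"
    HUMAN_DECIDES = "Human decides"

# Action names follow the caption; the mapping itself is illustrative.
ACTION_ZONES = {
    "parse": Zone.AI_ACTS,
    "scan": Zone.AI_ACTS,
    "format": Zone.AI_ACTS,
    "enrich": Zone.AI_PROPOSES,
    "generate_variant": Zone.AI_PROPOSES,
    "validate": Zone.HUMAN_DECIDES,
    "approve": Zone.HUMAN_DECIDES,
    "ship": Zone.HUMAN_DECIDES,
}

def route(action: str, actor_is_ai: bool) -> str:
    """Decide what the orchestrator does with a requested action (hypothetical rule)."""
    zone = ACTION_ZONES[action]
    if not actor_is_ai or zone is Zone.AI_ACTS:
        return "execute"
    if zone is Zone.AI_PROPOSES:
        return "queue_for_human_review"   # AI output becomes a proposal, never a decision
    return "reject"                       # AI can never validate, approve or ship
```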

36 packages. 18 approvals. 200+ state transitions. The combinatorial reality that makes manual workflows collapse.

The core complexity of ShelfFlow isn't any single content package. It's the combinatorial explosion when you multiply SKUs by retailers by markets. A "simple" 6-SKU launch across 6 retailers generates 36 unique content packages, each needing at least 3 human touch points.

This is where manual workflows break. Not at package #1, but at package #27, when reviewer fatigue sets in and the differences between Amazon and Walmart specs blur together. ShelfFlow's job is to make package #36 as reviewable as package #1.
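The arithmetic behind the matrix is a Cartesian product. This sketch uses the 6-SKU × 6-retailer example above and the four mandatory gates. The SKU, retailer and market labels are placeholders. It reproduces the 36 packages and 144 gate evaluations this case study cites, and it shows how a second (hypothetical) market doubles the package count.

```python
from itertools import product

skus = [f"SKU-{i:02d}" for i in range(1, 7)]        # 6 SKUs
retailers = [f"Retailer-{c}" for c in "ABCDEF"]    # 6 retailers
GATES = 4                                          # mandatory decision gates

# One package per SKU x retailer cell.
packages = list(product(skus, retailers))
gate_evaluations = len(packages) * GATES

# Adding a market dimension multiplies the matrix again.
markets = ["Market-1", "Market-2"]                 # hypothetical markets
per_market = list(product(skus, retailers, markets))

print(len(packages), gate_evaluations, len(per_market))  # 36 144 72
```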

The content matrix explosion: 1 brief → 6 SKUs × 3 retailers × 2 variants = 36 content packages → 18 approval decisions (one per SKU × retailer cell) → 1 launch.

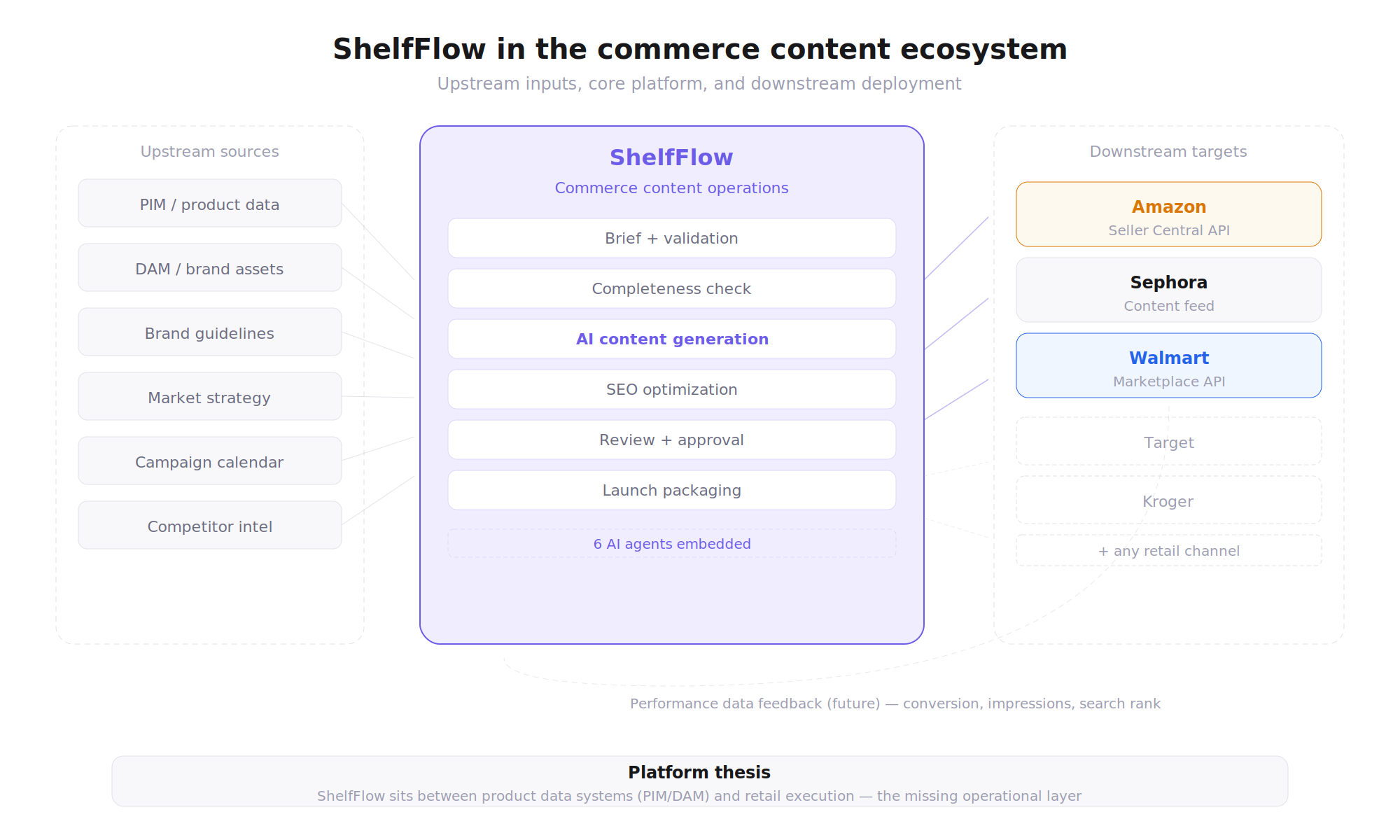

ShelfFlow sits between the brand team and the retailer. It's the coordination layer, not another content tool.

Enterprise content teams don't work in isolation. Product data lives in PIM systems. Brand assets live in DAMs. Compliance rules live in regulatory databases. Finished content ships through retailer portals with their own APIs and validation rules.

ShelfFlow doesn't replace any of these systems. It orchestrates the workflow between them: pulling structured product data at brief intake, validating claims against compliance databases during review, and pushing retailer-formatted packages to distribution portals at launch. The design assumption was that integration points would be messy and unreliable, so the system had to handle partial data, format mismatches and failed API calls gracefully without blocking the entire pipeline.
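Here is a minimal sketch of the "don't block the entire pipeline" assumption at launch. Each package is pushed independently, SKUs are mapped to retailer IDs first, and a failed or unmapped push is recorded against that package only. `push_all`, `PushResult` and the `push_fn` portal client are hypothetical. A real integration would also need retries and portal-specific validation, which are left out here.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class PushResult:
    package_id: str
    ok: bool
    error: str = ""

def push_all(
    packages: list[dict],
    push_fn: Callable[[str, str, str], None],     # hypothetical portal client
    retailer_ids: dict[tuple[str, str], str],     # (sku, retailer) -> retailer's own ID
) -> list[PushResult]:
    """Push each package on its own so one failure never blocks the rest."""
    results = []
    for pkg in packages:
        retailer_sku = retailer_ids.get((pkg["sku"], pkg["retailer"]))
        if retailer_sku is None:
            results.append(PushResult(pkg["id"], False, "no retailer ID mapped"))
            continue
        try:
            push_fn(pkg["retailer"], retailer_sku, pkg["content"])
            results.append(PushResult(pkg["id"], True))
        except Exception as exc:  # isolate the failure to this package
            results.append(PushResult(pkg["id"], False, str(exc)))
    return results
```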

- Upstream: Product briefs, PIM data, brand guidelines, regulatory databases

- Core: ShelfFlow workflow engine, AI agents, decision gates, state machine

- Downstream: Retailer portals (Amazon, Walmart, Target, etc.), content delivery, audit logs

Integration map. Upstream sources (PIM, DAM, brand guidelines) flow in. Retailer-ready content flows out to Amazon, Sephora, Walmart and other retail channels. ShelfFlow is the coordination layer, not the data layer.

Four roles, clear authority boundaries. The system enforces who can approve what. Process docs don't.

In the existing workflow, anyone with email access could informally "approve" content. That created accountability gaps. When something shipped with an incorrect claim, no one could determine who had actually signed off on it.

ShelfFlow defines four operator roles, each with explicit permissions mapped to specific workflow stages and gate authority. A Content Lead can approve briefs and drafts, but cannot sign off on compliance. A Compliance Officer owns Gate 3, but has no authority over launch. Role boundaries are enforced by the system, not by process documentation or team agreements.
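Role enforcement can be sketched as a permission table checked on every gate approval. Only the two roles described above appear, because the other two roles are not specified here. Gate 1 (brief validation) and Gate 3 (compliance) follow the text. Treating draft approval as Gate 2 is an assumption, as are the names `ROLE_GATES`, `approve_gate` and `NotAuthorised`.

```python
from enum import Enum

class Gate(Enum):
    G1_BRIEF = 1
    G2_DRAFT = 2        # assumption: draft approval sits at Gate 2
    G3_COMPLIANCE = 3
    G4_LAUNCH = 4

# Only the two roles named in the text; the remaining two are not described.
ROLE_GATES: dict[str, set[Gate]] = {
    "content_lead": {Gate.G1_BRIEF, Gate.G2_DRAFT},
    "compliance_officer": {Gate.G3_COMPLIANCE},
}

class NotAuthorised(PermissionError):
    pass

def approve_gate(role: str, gate: Gate) -> bool:
    """Allow the approval only if the role owns that gate; otherwise raise."""
    if gate not in ROLE_GATES.get(role, set()):
        raise NotAuthorised(f"{role} cannot approve {gate.name}")
    return True
```

Because the check lives in the system, not in a process document, a Content Lead physically cannot sign off on compliance.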

Authority mapping

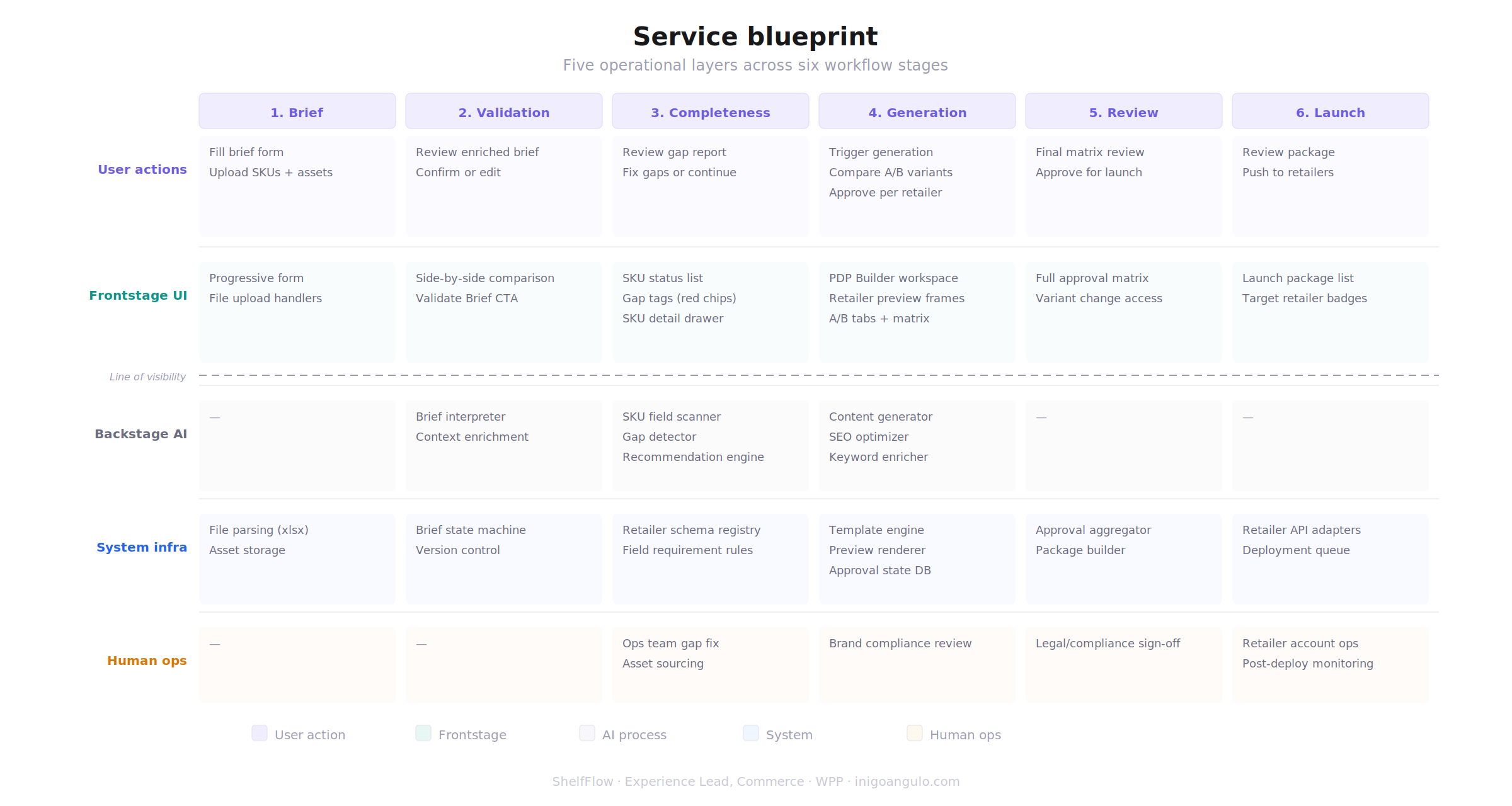

Service blueprint. Five operational layers mapped across all six workflow stages. This diagram drove conversations with engineering about where AI runs server-side vs. where humans interact in the UI.

Five rules that constrained every design decision. Not aspirational values. Operational guardrails.

These principles emerged from research, not from brainstorming. Each one addresses a specific failure mode I observed in existing workflows, or a structural constraint that the system had to respect to work at enterprise scale.

- Keep AI assistive, not autonomous. No AI agent can advance content past a decision gate. AI drafts, suggests and flags. Humans validate, approve and ship. This isn't a philosophical position. It's a compliance requirement in enterprise retail.

- Every state transition must be auditable. If a retailer or legal team asks "who approved this claim?", the system returns a timestamped answer with the approver's name. No ambiguity. No reconstruction from email threads.

- The board is the single source of truth. If it's not on the board, it doesn't exist. No side channels, no email approvals, no Slack-based sign-offs. The system must be the canonical record of what happened and when.

- Design for operational scale, not ideal conditions. Package #36 must be as reviewable as package #1. The interface assumes reviewer fatigue, not reviewer enthusiasm. Urgency signals, comparison tools and batch operations all exist to fight decision quality decay.

- Structure complexity without slowing teams down. 36 packages, 144 gate evaluations, 200+ state transitions per launch. The operator sees what needs attention now. The system absorbs the combinatorial complexity so the human can focus on judgement calls.